Progress Report 2010

Revision as of 19:38, 26 May 2010

Due Date

This report is due on the 28th of May, 2010 (Friday, week 11).

Executive Summary

For decades the written code linked to the “Somerton Man Mystery” (or Taman Shud Case) has remained undeciphered and has yielded no solid information about the man’s history or identity. This project aims to shed light on the unsolved mystery by tapping into the wealth of information available on the internet.

A recently collected set of random letter samples has been used to reinforce previous claims that the code is unlikely to be just a randomly generated sequence of letters. After a thorough revision and check of the code previously used to produce results, it has been agreed that the Somerton Man code is most likely an initialism.
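A comparison of this kind rests on counting how often each letter occurs. Below is a minimal Java sketch. The class and method names are illustrative assumptions, not taken from the project's code, and only the letters A-Z are counted after case-folding. `squaredDistance` is one simple distance measure, assumed here for illustration, for comparing two distributions, for example the code's letters against a random sample.

```java
import java.util.Locale;

/** Illustrative helper (hypothetical): relative letter frequencies of a text sample, A-Z only. */
final class LetterFrequency {

    /** Returns 26 relative frequencies (index 0 = 'A'); characters outside A-Z are ignored. */
    static double[] relativeFrequencies(String sample) {
        int[] counts = new int[26];
        int total = 0;
        for (char c : sample.toUpperCase(Locale.ROOT).toCharArray()) {
            if (c >= 'A' && c <= 'Z') {
                counts[c - 'A']++;
                total++;
            }
        }
        double[] freq = new double[26];
        if (total == 0) {
            return freq; // no letters at all: every frequency stays 0
        }
        for (int i = 0; i < 26; i++) {
            freq[i] = (double) counts[i] / total;
        }
        return freq;
    }

    /** Sum of squared differences between two 26-entry distributions (0 means identical). */
    static double squaredDistance(double[] a, double[] b) {
        double sum = 0.0;
        for (int i = 0; i < 26; i++) {
            double d = a[i] - b[i];
            sum += d * d;
        }
        return sum;
    }

    public static void main(String[] args) {
        double[] f = relativeFrequencies("abab c");
        System.out.printf(Locale.ROOT, "A=%.2f B=%.2f C=%.2f%n", f[0], f[1], f[2]); // A=0.40 B=0.40 C=0.20
    }
}
```

A smaller distance between the code's distribution and a sample's distribution means the two are more alike. On its own it is only a rough indicator, not a statistical test.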

The code found with the dead man will be used in conjunction with a suitable “Web Crawler” and purpose-built pattern matching algorithms. The aim is to target websites of interest and filter their raw text in search of character patterns that also appear in the code. Once solid results are obtained, frequency analysis will hopefully support a firm hypothesis about the possible meaning of the code.
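Since the code is thought to be an initialism, one way to search raw text for its character patterns is to reduce the text to the first letter of each word and look for the pattern there. Below is a minimal Java sketch under stated assumptions. A "word" is taken to be a run of letters (an apostrophe inside a word does not split it, while hyphens and digits do). The class and method names are hypothetical, and the example sentence and pattern are made up rather than taken from the code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

/** Illustrative sketch (hypothetical): reduce raw text to word initials and look for a letter pattern. */
final class InitialismSearch {

    /**
     * Upper-cased first letter of every word. A word is assumed to be a run of letters;
     * an apostrophe inside a word ("don't") does not start a new word, but hyphens,
     * digits and other punctuation do.
     */
    static String initials(String text) {
        StringBuilder sb = new StringBuilder();
        boolean inWord = false;
        for (int i = 0; i < text.length(); i++) {
            char c = text.charAt(i);
            if (Character.isLetter(c)) {
                if (!inWord) {
                    sb.append(Character.toUpperCase(c));
                    inWord = true;
                }
            } else if (c != '\'' || !inWord) {
                inWord = false; // any non-letter except an in-word apostrophe ends the word
            }
        }
        return sb.toString();
    }

    /** 0-based word positions where the initials of consecutive words spell out the pattern. */
    static List<Integer> findPattern(String text, String pattern) {
        String init = initials(text);
        String target = pattern.toUpperCase(Locale.ROOT);
        List<Integer> hits = new ArrayList<>();
        if (target.isEmpty()) {
            return hits;
        }
        int idx = init.indexOf(target);
        while (idx >= 0) {
            hits.add(idx);
            idx = init.indexOf(target, idx + 1); // overlapping matches are reported too
        }
        return hits;
    }

    public static void main(String[] args) {
        String text = "It is a truth universally acknowledged";
        System.out.println(initials(text));           // IIATUA
        System.out.println(findPattern(text, "IAT")); // [1]
    }
}
```

Each word contributes exactly one initial, so a position in the initials string is also the index of the word where the match starts. That makes it easy to quote the surrounding text for a hit.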

unfinished***

Aims and Objectives

The aim of the project is to make progress on the Somerton Man case using engineering techniques such as information theory, problem solving, statistics, encryption, decryption and software coding. Using these techniques, the results from the previous year’s project will be revised and used to set the direction for the rest of the project. Once past results have been properly revised, the next aim is to use a web crawler to access the raw text of websites of interest. Specifically designed algorithms will then be run on the sourced text to search for patterns and recurrences that may be of interest or related to the contents of the Somerton Man code.

Project Background

Mainly taken from proposal - michael will work on and edit this part

The Case

The project is based on efforts to discover the identity of a middle-aged man found dead on a metropolitan beach in South Australia in 1948. With little solid evidence apart from the body itself, the man’s death has remained a mystery surrounded by controversy for decades. He was found resting against a rock wall on Somerton Beach, opposite a home for crippled children, shortly before 6:45 am on the 1st of December 1948. A post-mortem examination found severe congestion in his organs, and it was concluded that the death could not have been natural. The most likely cause of death was judged to be poisoning, and in 1994 a forensic review identified the poison as digitalis.

Among the man’s possessions were cigarettes, matches, a metal comb, chewing gum, a railway ticket to Henley Beach, a bus ticket and a tram ticket. A brown suitcase belonging to the man was later discovered at the Adelaide Railway Station. It contained various articles of clothing, a screwdriver, a stencilling brush, a table knife, scissors and other such utensils. Authorities found the name “T. Keane” among its contents, and the unknown man was temporarily identified as a local sailor named Tom Keane. However, this soon proved to be incorrect, and the man’s identity has been a mystery ever since. The Somerton man has since been incorrectly identified as a missing stable hand, a worker on a steamship and a Swedish man.

A spy theory also began circulating because of the circumstances of the Somerton man’s death. In 1947, the US Army’s Signal Intelligence Service, as part of Operation Venona, discovered that top secret material had been leaked from Australia’s Department of External Affairs to the Soviet embassy in Canberra. On the 16th of August 1948, about three and a half months before the death of the Somerton man, an overdose of digitalis was reported as the cause of death of US Assistant Treasury Secretary Harry Dexter White, who had been accused of Soviet espionage under Operation Venona. Because of the similar causes of death, rumours that the unknown man was a Soviet spy began to spread.

By far the most intriguing piece of evidence related to the case was a small piece of paper bearing the words “Tamam Shud”, which translates to “ended” or “finished”. This paper was soon identified as having come from the last page of a book of poems called The Rubaiyat by Omar Khayyam, a famous Persian poet. After police made an announcement seeking the missing copy of The Rubaiyat, a local Glenelg resident came forward with a copy that he had found in the back seat of his car. It was in the back of this copy of The Rubaiyat that the Mystery Code was discovered.

The Code

The code found in the back of The Rubaiyat consists of a sequence of 40–50 letters. It has long been dismissed as unsolvable because of the poor quality of the handwriting and the small number of letters available. The first character on the first and third lines looks like an “M” or a “W”, and the first character on the fifth line looks like an “I” or a “V”. The second line is crossed out (and has been omitted entirely in previous cracking attempts), and there is an “X” above the “O” on the fourth line. Because some of these letters and lines are ambiguous, assumptions about what is and is not part of the code may be wrong.
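Each ambiguous character multiplies the number of possible readings. One way to avoid committing to a single, possibly wrong, transcription is to enumerate every reading and search for each one. Below is a minimal Java sketch. The class name is hypothetical, and the example line is made up for illustration rather than taken from the actual code.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative sketch (hypothetical): enumerate every reading of a line with uncertain letters. */
final class AmbiguousReadings {

    /**
     * Each element of positions lists the letters a handwritten character might be,
     * e.g. "MW" for a character that could be an M or a W, or "A" for a clear A.
     */
    static List<String> expand(String[] positions) {
        List<String> readings = new ArrayList<>();
        readings.add("");
        for (String options : positions) {
            List<String> next = new ArrayList<>();
            for (String prefix : readings) {
                for (char c : options.toCharArray()) {
                    next.add(prefix + c); // extend every reading so far with each option
                }
            }
            readings = next;
        }
        return readings;
    }

    public static void main(String[] args) {
        // Made-up line: first character read as M or W, last as I or V.
        String[] line = {"MW", "A", "B", "IV"};
        System.out.println(expand(line)); // [MABI, MABV, WABI, WABV]
    }
}
```

Two ambiguous characters with two options each already give four readings. The number of searches therefore doubles with every extra character treated as uncertain.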

Professional attempts at breaking this code were carried out decades ago and were largely limited by the lack of modern techniques and strategies. When the code was analysed by experts in the Australian Department of Defence in 1978, they made the following statement: “There are insufficient symbols to provide a pattern. The symbols could be a complex substitute code or the meaningless response to a disturbed mind. It is not possible to provide a satisfactory answer.”

Last year’s code-cracking project group ran a number of tests to narrow down which encryption method was most likely used. In their final report, they determined that the Somerton man’s code was not a transposition or substitution cipher. They tested the Playfair cipher, the Vigenère cipher and the one-time pad[2]. The group concluded that the code was most likely an initialism of a sentence of text and, using frequency analysis, determined that the most likely intended sequence of letters in the code was:

Requirements and Specifications

The entire project can be broken into 3 main modules. These are:

- Verification of past results

- Design/utilisation of a web crawler

- Pattern matching algorithm

Each module has a set of requirements specific to its design and application with respect to the project.

Verification Requirements

Software Requirements

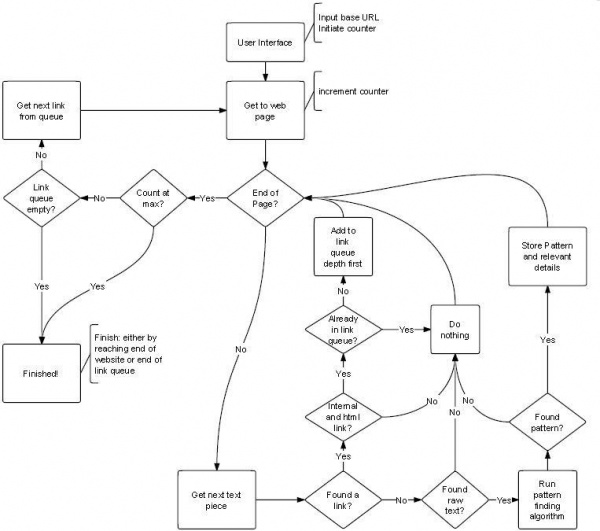

The block diagram above shows the software layout for traversing a website, filtering the raw text and storing all important findings. This design uses a breadth-first traversal, which is currently being revised and may be changed to depth-first. For more information, see the "Web Crawler Plans" section.

Web Crawling Requirements

The web crawling device will be used to visit a website of interest and retrieve data that may contain information relevant to the project. The basic requirements of the web crawler are as follows:

- Take in a base URL from the user

- Traverse the base website

- Pass forward any useful data to the pattern matching algorithm

- Finish after traversing the entire website or reaching a specified limit
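The loop implied by these requirements can be sketched in Java under several assumptions. The sketch uses breadth-first order and a same-host rule for "the base website". A hypothetical `linksOf` function stands in for downloading a page and extracting its links; this is not Arachnid's API. Each link is resolved against the page it came from with `java.net.URI.resolve`, so relative links such as `/b` become absolute URLs before the host comparison.

```java
import java.net.URI;
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.function.Function;

/** Illustrative sketch of a bounded, single-site crawl loop (hypothetical, not Arachnid's API). */
final class BoundedCrawl {

    /**
     * Visits pages starting at baseUrl, following only links on the same host, and stops when
     * no unvisited pages remain or maxPages pages have been visited. linksOf is a stand-in
     * for "download this page and extract its links".
     */
    static List<String> crawl(String baseUrl, Function<String, List<String>> linksOf, int maxPages) {
        URI base = URI.create(baseUrl);
        List<String> visited = new ArrayList<>();
        Set<String> seen = new HashSet<>();
        Deque<String> queue = new ArrayDeque<>();
        queue.addLast(baseUrl);
        seen.add(baseUrl);
        while (!queue.isEmpty() && visited.size() < maxPages) {
            String url = queue.pollFirst();
            visited.add(url); // this is where the page text would be passed to the pattern matcher
            for (String link : linksOf.apply(url)) {
                URI target;
                try {
                    target = URI.create(url).resolve(link); // make relative links absolute
                } catch (IllegalArgumentException e) {
                    continue; // malformed link: skip it
                }
                boolean sameSite = base.getHost() != null && base.getHost().equalsIgnoreCase(target.getHost());
                if (sameSite && seen.add(target.toString())) {
                    queue.addLast(target.toString());
                }
            }
        }
        return visited;
    }

    public static void main(String[] args) {
        // A tiny in-memory "website" used instead of real HTTP requests.
        Map<String, List<String>> site = Map.of(
                "http://example.org/", List.of("/a", "http://other.org/x"),
                "http://example.org/a", List.of("/", "/b"),
                "http://example.org/b", List.of());
        System.out.println(crawl("http://example.org/", u -> site.getOrDefault(u, List.of()), 10));
    }
}
```

The `seen` set stops a page from being queued twice when several pages link to it. The `maxPages` check covers the "specified limit" requirement.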

The crawler will be required to detect and handle the following circumstances:

- Bad I/O

- Found a valid HTML URL

- Found a non-HTML URL

- Found a URL linking to an external site

- Found an invalid URL
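One way to sort a found link into these cases is sketched below in Java using `java.net.URI`. Several details are assumptions for illustration: the category names, the file-extension list used to spot non-HTML links, and treating non-http links as invalid (following the crawler's current http-only rule). A real crawler would more reliably check the `Content-Type` header. Bad I/O is not a category here because it only shows up when a page is actually fetched, e.g. as an `IOException`, and cannot be detected from the URL text.

```java
import java.net.URI;
import java.net.URISyntaxException;
import java.util.Locale;

/** Illustrative sketch (hypothetical): classify a link found on a page of the base website. */
final class UrlClassifier {

    enum Kind { HTML, NON_HTML, EXTERNAL, INVALID }

    // Assumed extensions treated as non-HTML; checking Content-Type would be more reliable.
    private static final String[] NON_HTML_SUFFIXES = {".pdf", ".jpg", ".jpeg", ".png", ".gif", ".zip", ".doc"};

    static Kind classify(String link, URI base) {
        if (link == null) {
            return Kind.INVALID; // null URL
        }
        URI uri;
        try {
            uri = base.resolve(new URI(link.trim())); // relative links become absolute
        } catch (URISyntaxException e) {
            return Kind.INVALID; // not a syntactically valid URI
        }
        String scheme = uri.getScheme();
        if (scheme == null || !scheme.equalsIgnoreCase("http") || uri.getHost() == null) {
            return Kind.INVALID; // only http links are followed for now (mailto:, ftp:, ... rejected)
        }
        if (!uri.getHost().equalsIgnoreCase(base.getHost())) {
            return Kind.EXTERNAL; // different host: outside the website being searched
        }
        String path = uri.getPath() == null ? "" : uri.getPath().toLowerCase(Locale.ROOT);
        for (String suffix : NON_HTML_SUFFIXES) {
            if (path.endsWith(suffix)) {
                return Kind.NON_HTML;
            }
        }
        return Kind.HTML;
    }
}
```

For example, with a base of `http://example.org/`, the link `/paper.pdf` is classed as `NON_HTML`, while `http://other.org/` is `EXTERNAL`.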

Pattern Matching Algorithm Requirements

Progress So Far

Verification of past results

Text Pattern Algorithms

Web Crawling

An open-source, Java-based web crawler called Arachnid has so far been the main focus for producing the project’s web crawler. It is proving quite useful for the project because of the following traits:

- Java-based

- Basic example provided - this allows the crawler to be learned reasonably quickly instead of having to become familiar with a crawler from scratch

- Specific case-handling methods provided - methods for some URL cases are provided (they contain no handling code, but they have provided a starting point for the solution)

- Highly modifiable

So far, after modifying the supplied crawler and reusing methods from the supplied example, the crawler is capable of the following:

- Compiling successfully (This is a huge bonus! It allows actual tests to take place)

- Taking in a base URL from the console

- Testing for the correct protocol (we only want to look at http websites for now)

- Testing for a null URL

- Testing for non-HTML URLs

- Testing for URLs that link to external websites

- Running handleLink()

- Iterating through the website and producing a list of the URLs found and their associated names, along with an incrementing counter
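Producing a numbered list of URLs and link names can be sketched with a regular expression over a page's raw HTML. This is an illustration under stated assumptions, not the project's Arachnid code. A regex only handles simple `<a href=...>` tags and is no substitute for a real HTML parser. The class name and the `counter: name -> url` output format are made up here.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Illustrative sketch (hypothetical): numbered list of links and their names from raw HTML. */
final class LinkLister {

    // Rough pattern for <a ... href="..."> name </a>; group 1 = URL, group 2 = link text.
    private static final Pattern ANCHOR = Pattern.compile(
            "<a\\s[^>]*?href\\s*=\\s*[\"']([^\"']*)[\"'][^>]*>(.*?)</a>",
            Pattern.CASE_INSENSITIVE | Pattern.DOTALL);

    /** Returns lines of the form "1: name -> url" in the order the links appear. */
    static List<String> listLinks(String html) {
        List<String> lines = new ArrayList<>();
        Matcher m = ANCHOR.matcher(html);
        int counter = 0;
        while (m.find()) {
            String url = m.group(1);
            String name = m.group(2).replaceAll("<[^>]*>", "").trim(); // drop tags nested in the link text
            counter++;
            lines.add(counter + ": " + name + " -> " + url);
        }
        return lines;
    }

    public static void main(String[] args) {
        String html = "<p><a href=\"/a.html\">Home</a> and <A HREF='/b'><b>Bold</b> link</A></p>";
        listLinks(html).forEach(System.out::println);
    }
}
```

The non-greedy `(.*?)` stops at the first closing `</a>`, so two links on the same line are reported separately.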

UNFINISHED******

Approach for remaining part of the project

Web Crawler Plans

There are currently two major issues to be resolved in the web crawler design:

- Character encoding differences with HTML URLs

- Traversal method - breadth-first vs depth-first
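The difference between the two traversal methods comes down to which end of the list of pending pages is taken next. The Java sketch below shows the two visit orders on a made-up in-memory link graph. It is an illustration only, not the project's crawler, and the class name is hypothetical.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Illustrative comparison of breadth-first and depth-first visit orders over a link graph. */
final class TraversalOrder {

    static List<String> traverse(Map<String, List<String>> links, String start, boolean depthFirst) {
        List<String> order = new ArrayList<>();
        Set<String> visited = new HashSet<>();
        Deque<String> frontier = new ArrayDeque<>();
        frontier.addLast(start);
        while (!frontier.isEmpty()) {
            // Breadth-first takes the oldest entry (a queue); depth-first takes the newest (a stack).
            String page = depthFirst ? frontier.pollLast() : frontier.pollFirst();
            if (!visited.add(page)) {
                continue; // already reached through another link
            }
            order.add(page);
            List<String> out = links.getOrDefault(page, List.of());
            if (depthFirst) {
                // Push in reverse so the first link on the page is explored first.
                for (int i = out.size() - 1; i >= 0; i--) {
                    frontier.addLast(out.get(i));
                }
            } else {
                frontier.addAll(out); // appended at the end, behind pages found earlier
            }
        }
        return order;
    }

    public static void main(String[] args) {
        Map<String, List<String>> site = Map.of(
                "/", List.of("/a", "/b"),
                "/a", List.of("/a1", "/a2"),
                "/b", List.of("/b1"));
        System.out.println(traverse(site, "/", false)); // [/, /a, /b, /a1, /a2, /b1]
        System.out.println(traverse(site, "/", true));  // [/, /a, /a1, /a2, /b, /b1]
    }
}
```

With a page limit in place, breadth-first keeps the crawl close to the base URL. Depth-first, by contrast, may use up the limit inside one deep branch of the site.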

plans***

Pattern Algorithm Plans

Obtaining and Using Results

sites of interest**

what we will look for**

what is the point of the results?**

Project Management

Project Alterations

As the project has progressed, a few things have changed with respect to the original project plan.

It was originally planned to search the entire internet for patterns of interest. It was quickly realised that this would be unachievable within the time frame and resources of this project: the internet is simply too large and changes too quickly for our techniques and equipment. For this reason the project goal has been downsized to searching through one website at a time. That is, there are now constraints and limits on the size of the search that the software will perform. The specifications now state that the software will be required to take in a starting URL and search through that website and that website only. This introduces some extra challenges, such as dealing with URLs that link to external websites, but it also makes the project goal much more manageable and realistic.

Budget & Resources

Risk Management

changes in risks? anything that has happened

Conclusion

References

See also

- Cipher Cracking 2010

- Final report 2009: Who killed the Somerton man?

- Timeline of the Taman Shud Case

- List of people connected to the Taman Shud Case

- List of facts on the Taman Shud Case that are often misreported

- Structuring the Taman Shud code cracking process

- List of facts we do know about the Somerton Man