Stage 2 Progress Report 2011

Executive Summary

The case of the Somerton Man and the code he left behind has baffled police, the public and cryptanalysts for six decades. This project aims to take advantage of relatively recent advances in technology, including computers and the World Wide Web, as well as utilising the immense amount of data available on the internet to approach the as yet un-deciphered code from a new angle. With the help of modern technology, the project hopes to shed light on what the code could mean.

The project is now 12 weeks into its lifespan and so far significant progress has been made. Cryptanalytical work testing numerous ciphers and statistically analysing documents linked to the code has already been completed. Preliminary designs for a web crawler are currently being carried out, as is early development on the associated pattern matcher. To date, progress is going according to schedule with no major delays or redesigns.

Introduction

This project, ‘Code Cracking: Who murdered the Somerton Man?’, concerns a code found on the body of a man discovered dead on Somerton Beach in 1948. The inspiration for the project is the chance to shed light on what this code could be and what it could mean, taking advantage of technology not available when the case was first investigated in the late 1940s. However, products created in the course of the project will be designed to have broader uses, outside of their application in examining this case.

Background

The Case

At 6:30am on December 1 1948, a deceased man was discovered on Somerton Beach, resting against a rock wall. The man carried no identification, just some chewing gum, cigarettes, a comb, an unused train ticket and a used bus ticket to a Glenelg bus stop just 250m from the body’s location. The pathologist conducting the autopsy found that while the man’s heart size was normal, the stomach and kidneys were deeply congested and the liver contained excess blood. He concluded that the death was not natural and suggested a poison which he could not identify at the time was the likely cause of death. A review of the case in 1994 concluded that there was little doubt the man died from digitalis poisoning.

The identity of the man was, and still is, a cause of great mystery. He was described as of eastern European appearance, in his mid-40s and in top physical condition, with his hands showing no signs of manual labour. He was clean shaven, dressed in a fashionable European suit and polished boots. All the nametags from his clothing had been removed. No record of his fingerprints or dental structure was found in any international registry. By early February 1949 there had been eight different "positive" identifications of the body by members of the public.

Adding to the mystery was a brown suitcase found at the Adelaide Railway Station which is believed to have belonged to the Somerton Man. It contained shaving items and other tools such as scissors and stencilling equipment as well as additional clothes, some with the label removed and some with a nametag that was never successfully linked to anyone. It is thought that after coming into the city via train overnight, the man checked his suitcase into the railway station cloakroom on the morning of November 30th, before catching a bus to Glenelg and walking to Somerton Beach, where he lay down and died some time during the night.

Many theories about the Somerton Man's identity have been developed to complement the mysterious circumstances surrounding the case. A popular theory is that the man was a Soviet spy, perhaps his presence in Adelaide relating to the top-secret missile-launching site located at Woomera. Proponents of this theory point out the nature of the mysterious poisoning death and the fact no one could identify him as key reasons for their belief.[1]

The Code

A tiny piece of paper with the words “Tamam Shud” printed on it was found deep in one of the pockets in the Somerton Man’s clothing. In Persian these words mean “ended” or “finished”. The words were identified as being from the last page of a book containing a collection of Persian poems called “The Rubaiyat of Omar Khayyam”. An Australia-wide search was conducted to find a copy of the book with the “Tamam Shud” segment ripped out of the last page. A man came forward to reveal he had found a copy of the Rubaiyat tossed into the back seat of his car, which was parked in Glenelg on the night of the 30th of November 1948. Tests later confirmed the scrap of paper came from this book.

What was more fascinating was a 5 line marking of a code found in the back of the book. One interpretation of the code is as follows:

No one has yet determined what this code means and deciphering it provides the basis and inspiration for this project.

Technological Advances

The fact that no one has been able to solve the case in over 60 years raises the question: Why do we think we can do it? The answer to this question lies in the technological advances mankind has made in this time period. By far the biggest developments have been the evolution of computers and the introduction of the World Wide Web. The methodology of this project plans to utilise these advances.

The internet makes available vast amounts of data, suggesting the possibility that there is already an answer to the meaning of the code out there; it just has not been found. The project aims to create web crawling software that is able to search the internet for this answer, which will also require the phenomenal processing power of computers to sort through the data provided by the web crawler.

Previous Studies

The code our project examines involves a 62-year-old cold case murder, and as such there have been many attempts over the years to shed light on the code’s meaning. Of most interest is the analysis performed by Government cryptanalysts in Canberra and two previous projects done by students at the University of Adelaide.

Three conclusions were drawn from the examination of the code by cryptanalysts in the Department of Defence in 1978. These were:

- There are insufficient symbols to provide a pattern

- The symbols may be a complex substitute code or could be the ramblings of a disturbed mind

- It is not possible to provide a satisfactory answer.[2]

While these results are somewhat discouraging, this study was done in 1978 and there have been vast technological improvements since then, of which the 2011 Project team plan to take advantage. Of particular interest are the internet and the data processing power of computers.

It is expected that the University of Adelaide student projects undertaken in the past two years are going to be more useful in providing a starting point for the investigation in 2011.

In 2009, the students concentrated on cryptanalytic techniques, investigating whether the code contained meaning, what language the code was likely to be in, and what sort of cipher could have been used. They drew three main conclusions:

- The letters are not random – they mean something; they contain information.

- The code is not a transposition cipher – the letters are not simply shifted in position.

- The results are consistent with an English initialism – the letter distribution is consistent with the letter distribution of the first letter of English words.[3]

The honours students examining the code in 2010 took a slightly different approach. They wrote software to perform text pattern analysis and also attempted to match the code’s letter distribution to a specific type of English text (for example a novel, poem, scientific article or Shakespeare play). While they did not manage to match the code to a specific text type, they gathered significant amounts of pattern-matching data regarding text patterns found in the code. They found their pattern matching results were mostly consistent except for their analysis of the Rubaiyat of Omar Khayyam, the book of poems linked to the case. In the Rubaiyat they found few, if any, matches.[4]

The 2010 analysis also proposed to take advantage of the vast quantities of information available on the internet to search for patterns in the code. To do this, they created a simple web application and pattern matcher that could download a website’s contents and screen for patterns. In 2011, extensions to this work are planned. The aim is to produce a web-crawling application that can autonomously browse internet websites and perform pattern-matching analysis. Progress to date can be seen later in this report.

Something else both previous Adelaide University studies worked on was a Cipher Cross-Off list. This involved a list of ciphers that were identified as potentially being used in creating the code. The purpose of this list was to test individual ciphers on the list and attempt to rule them out (cross them off). This cross-off list is something we have continued as will be discussed further on.

Project Objectives

There are several broad-ranging objectives we plan to achieve in order to make our project successful and these are listed and explained below.

- Use cryptanalytic methods to analyse the Somerton Man Code in order to rule out as many ciphers and theories as possible.

- Create a generally applicable Web-Crawling Pattern-Matcher that is able to search the internet for patterns.

- Crack the Code and Solve the Case???

The first objective (cryptanalytic examination of the Somerton Man code) involves two main areas: the Cipher Cross-off List and the analysis of documents of interest. The aim of the Cipher Cross-off List is to methodically test and eliminate possible cipher techniques used to generate the code. In terms of documents of interest, noting that the book of poems, the Rubaiyat of Omar Khayyam, has close links to the case, the aim is to statistically analyse the text to determine if there are any significant links to the Somerton Man code.

The second objective, extending last year’s web pattern-matching application, is to provide an ability to analyse vast amounts of data from numerous web sources autonomously. This will be achieved by building and integrating a web-crawler and pattern-matcher. The purpose of this is not only to try to shed light on the Somerton Man’s code, but also to provide a generally useful application that can accept a pattern or word stub and crawl the web searching for matches.

Finally, the objective of cracking the code and solving the case could be included. However, in over 6 decades of attempts the code has not been solved. So while the aim to shed light on the meaning of the code is present, success of the project is not dependent on the code or case being solved.

Project Breakdown and Requirements

Project Breakdown Overview

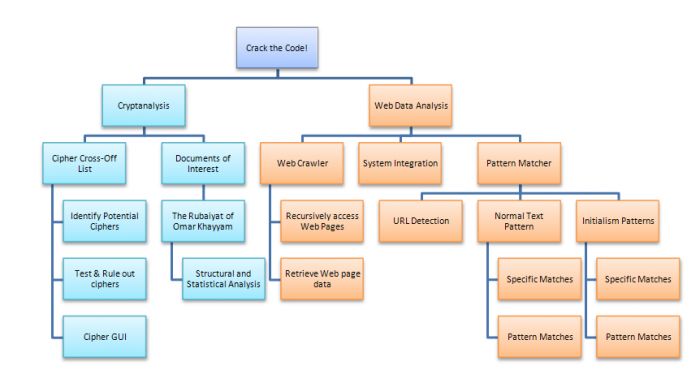

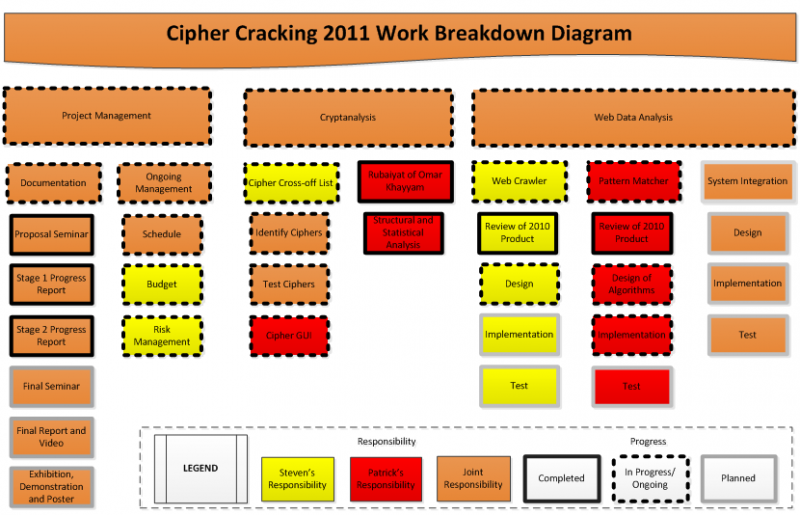

In order to achieve the objectives set out above, the project has been broken down along two main lines: Cryptanalysis and Web Data Analysis. The breakdown is shown diagrammatically below.

As discussed in the Project Objectives section, the cryptanalysis section comprises work on the Cipher Cross-off List, which involves identifying and testing ciphers. However, since the Stage 1 Progress Report submitted in Week 5, another task has been identified in relation to the cross-off list. This is the Cipher GUI, in which a GUI will be created to utilise the code produced to test ciphers from the cross-off list. The purpose of the GUI is to provide a user-friendly tool with developmental features that can be used to decrypt and encrypt text in a variety of cipher systems and analyse the effects of the text transformation. The other section under cryptanalysis, as identified in the Project Objectives, is the structural and statistical analysis of the document of interest: the Rubaiyat of Omar Khayyam.

The other arm of the project, Web Data Analysis, breaks the task of creating a web crawler that can pattern match down into three sections: the web crawler, the pattern matcher and system integration. The technical details for the web crawler and pattern matcher are discussed below.

Technical Designs and Requirements

Web Crawler and Pattern Matcher System Design

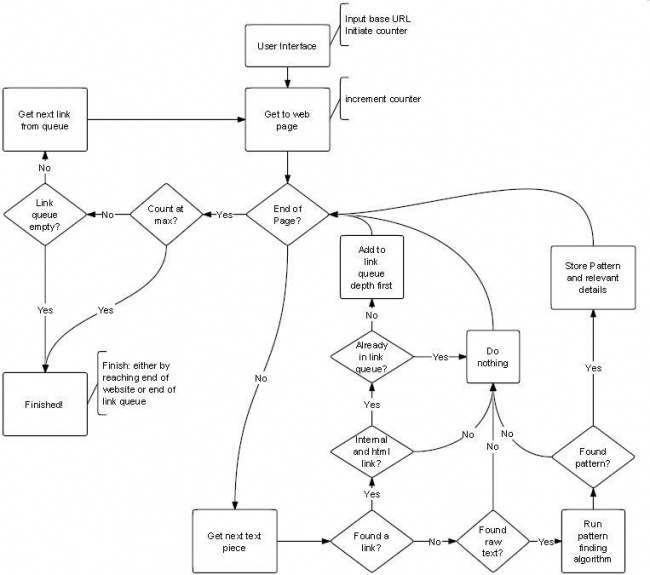

The project plan for the Web Crawler and Pattern Matcher is to design, code then test them separately before integrating them into a web-crawling pattern-matching application. The flowchart below shows a high level representation of the system.

Web Crawler Requirements and Implementation

The web crawler is to autonomously browse websites, downloading data. The requirements and planned implementation process for the web crawler are specified below.

Requirements

- Accept a URL or list of URLs in a text file as starting points from which to crawl.

- Practice “Friendly web crawling” by downloading the robots.txt file from each site and obeying the web crawling restrictions set out in the file.

- Appropriately handle errors such as a web page that does not exist. It should also be flexible enough to handle syntax errors in a page’s code.

- Should be able to read attached documents, including MS Word documents and .txt documents.

- Must not revisit the same site multiple times unless new content has been uploaded.

- Avoid infinite cycles such as when a page references itself.

- Crawl as efficiently as possible and avoid downloading duplicate data.
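The requirements on revisiting pages and avoiding infinite cycles can be illustrated with a minimal sketch. It assumes a breadth-first crawl order and a set of already-visited URLs; the class name `CrawlFrontier`, the `linksOf` function (standing in for "download the page and extract its links") and the `maxPages` limit are hypothetical names introduced for illustration, not part of the project's code.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.function.Function;

/** Hypothetical sketch: breadth-first crawl order with a visited set. */
class CrawlFrontier {

    /**
     * Returns the URLs in the order they would be fetched.
     * No URL is fetched twice, so self-links and cycles terminate.
     */
    static List<String> crawl(List<String> seeds,
                              Function<String, List<String>> linksOf,
                              int maxPages) {
        Deque<String> queue = new ArrayDeque<>(seeds);
        Set<String> visited = new HashSet<>();
        List<String> order = new ArrayList<>();
        while (!queue.isEmpty() && order.size() < maxPages) {
            String url = queue.removeFirst();
            if (!visited.add(url)) {
                continue; // already fetched: skip duplicates and cycles
            }
            order.add(url);
            for (String link : linksOf.apply(url)) {
                if (!visited.contains(link)) {
                    queue.addLast(link);
                }
            }
        }
        return order;
    }

    public static void main(String[] args) {
        // A tiny in-memory "site": a.html links to itself and to b.html.
        Map<String, List<String>> site = Map.of(
                "a.html", List.of("a.html", "b.html"),
                "b.html", List.of("a.html", "c.html"),
                "c.html", List.of());
        System.out.println(
                crawl(List.of("a.html"), url -> site.getOrDefault(url, List.of()), 100));
    }
}
```

The sketch does not cover robots.txt handling or detecting updated content; those would need to be layered on top of the visited check.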

Implementation Process

- Evaluation of previous (2010) efforts

- Research and evaluation of Web Crawler implementation options

- Implementation of identified Web Crawler solution

- Test and Verification of Web Crawler

Pattern Matcher Requirements and Implementation

The pattern matcher is to parse the output data from the web crawler and detect any pattern matches. The requirements and planned implementation process are specified below.

Requirements

- Should ignore html code and only parse content

- Detect encoded URL links and supply these back to the web crawler. This includes taking into account the different formatting URLs can be encoded in.

- Must provide a flexible pattern matching service, disregarding punctuation, upper/lower case etc.

- Must have an option to pattern-match data as whole words or as initials of words (as previous studies have suggested the code is an initialism).

- Detect both exact string matches and pattern matches.

- Should provide a wildcard option, where an arbitrary letter can be assigned.

- Results should be stored in a results text file, with details including: Continuous or Initials match; Exact or Pattern match; website found on; line found in.
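As a rough illustration of the first and third requirements, the sketch below strips HTML tags and normalises text before matching. It is a simplification under stated assumptions: the regular-expression tag removal is crude (a real implementation would more likely use an HTML parser), and the class and method names are hypothetical.

```java
import java.util.Locale;

/** Hypothetical sketch: prepare downloaded page text for pattern matching. */
class TextNormaliser {

    /** Crude tag removal: replaces anything between '<' and '>' with a space. */
    static String stripTags(String html) {
        return html.replaceAll("(?s)<[^>]*>", " ");
    }

    /** Upper-cases the text, drops apostrophes, and keeps only letters and single spaces. */
    static String normalise(String text) {
        return text.toUpperCase(Locale.ROOT)
                .replaceAll("['\u2019]", "")   // keep "don't" as one word
                .replaceAll("[^A-Z]+", " ")    // punctuation, digits, whitespace
                .trim();
    }

    /** Removes the spaces so patterns can match across word boundaries. */
    static String continuous(String normalised) {
        return normalised.replace(" ", "");
    }

    public static void main(String[] args) {
        String page = "<p>The Moving Finger writes; and, having writ,</p>";
        String words = normalise(stripTags(page));
        System.out.println(words);
        System.out.println(continuous(words));
    }
}
```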

Implementation Process

- Evaluation of previous efforts at pattern matcher code

- Design pattern matching algorithms

- Implement pattern matching algorithms

- Test and Verification of Pattern Matcher

- (Extension) GUI Interface development for pattern matcher (if time permits).

System Integration

Once the web crawler and pattern matcher are implemented and tested, they will need to be integrated into a complete web-crawling pattern-matching application. The requirements and implementation process are given below.

Requirements

- Web Crawler passes web page contents to Pattern Matcher

- Pattern Matcher returns any contained URLs to Web Crawler

- Integrated system autonomously browses multiple web pages and successfully provides pattern-matching results.

Implementation Process

- Design of Interface between Web Crawler and Pattern Matcher

- Implementation of interface between Web Crawler and Pattern Matcher

- Test and Verification of Integrated System

Software Requirements

For the purposes of compatibility, ease of development and testing, and the flexibility of the code, software requirements have been set out which all software must abide by.

- Code should be neat and well commented

- Code should be designed in a logical and modular manner

- All code is to be in Java

Work Completed to Date

Structural and Statistical Analysis of the Rubaiyat

Given the close links between the Rubaiyat of Omar Khayyam and the Somerton Man case and code, there remains suspicion that the code is somehow linked to the contents of the book. This theory has been investigated via statistical and structural analysis of the Rubaiyat.

This analysis tested three hypotheses, listed below:

- The code is an initialism of a poem in the Rubaiyat

  - Based on previous studies indicating an English initialism and the fact the code has four lines, with each poem being a quatrain (four line poem).

- The code is related to the initial letters of each word, line or poem

  - Based on previous studies indicating an English initialism.

- The code is generally related to text in the Rubaiyat

  - Based on the links between the Rubaiyat and the code.

Results of Tests

Hypothesis 1: The code is an initialism of a poem.

This hypothesis was tested by creating a Java text parser to scan through all poems contained in the Rubaiyat, gathering statistics on the number of words in each line (first, second, third, fourth) of each poem. The statistics gathered include the mean number of words in each line, the standard deviation, the maximum number of words in a line and the minimum. The results are shown in the table below, followed by the statistics from the Somerton Man’s code.

| Poem line | Mean words | Std dev | Max words | Min words |
|---|---|---|---|---|
| First | 8.00 | 1.15 | 10 | 5 |
| Second | 7.69 | 1.20 | 10 | 5 |
| Third | 7.88 | 1.06 | 10 | 5 |
| Fourth | 7.87 | 1.31 | 10 | 5 |

| Code line | Number of letters |
|---|---|
| First | 9 |
| Second | 11 |
| Third | 11 |
| Fourth | 13 |

The important result that stands out is the maximum number of words in the poem lines. Each line category has a maximum number of words of 10 across all of the 75 poems contained in the Rubaiyat. However, the code has 11, 11 and 13 letters in its second, third and fourth lines respectively, each over the maximum. These results allow Hypothesis 1 to be ruled out, giving the conclusion that the code is not an initialism of a Rubaiyat poem.
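The statistics and the elimination test described above can be sketched as follows. This is not the project's parser: the class and method names are hypothetical, and the use of the population standard deviation is an assumption, since the report does not say which form was used.

```java
/** Hypothetical sketch: word-count statistics for one line position across all poems. */
class LineStats {

    /** Counts whitespace-separated words in a line of verse. */
    static int wordCount(String line) {
        String trimmed = line.trim();
        return trimmed.isEmpty() ? 0 : trimmed.split("\\s+").length;
    }

    /** Returns {mean, population standard deviation, max, min} of the counts. */
    static double[] summarise(int[] counts) {
        double sum = 0;
        int max = Integer.MIN_VALUE;
        int min = Integer.MAX_VALUE;
        for (int c : counts) {
            sum += c;
            max = Math.max(max, c);
            min = Math.min(min, c);
        }
        double mean = sum / counts.length;
        double squares = 0;
        for (int c : counts) {
            squares += (c - mean) * (c - mean);
        }
        return new double[] {mean, Math.sqrt(squares / counts.length), max, min};
    }

    /** A code line can only be an initialism of a poem line if it is no longer than that line. */
    static boolean couldBeInitialism(int codeLineLetters, int maxWordsInPoemLine) {
        return codeLineLetters <= maxWordsInPoemLine;
    }

    public static void main(String[] args) {
        int[] codeLines = {9, 11, 11, 13}; // letters per code line, from the table above
        for (int letters : codeLines) {
            System.out.println(letters + " letters: " + couldBeInitialism(letters, 10));
        }
    }
}
```

With a maximum of 10 words in any poem line, the check fails for the code lines of 11, 11 and 13 letters, which is the basis for ruling out Hypothesis 1.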

Hypothesis 2: The code is related to the initial letters of each word, line or poem.

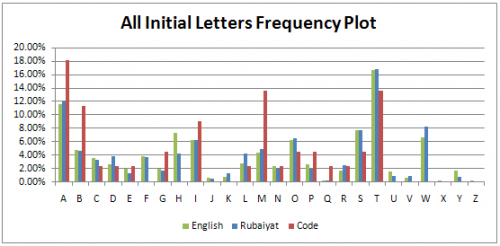

To test this hypothesis, Java text parser code was designed to extract the initial letters of each word, line and poem in the Rubaiyat and generate letter frequency results. The plots and discussions are given below.

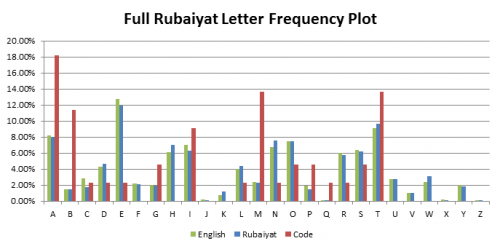

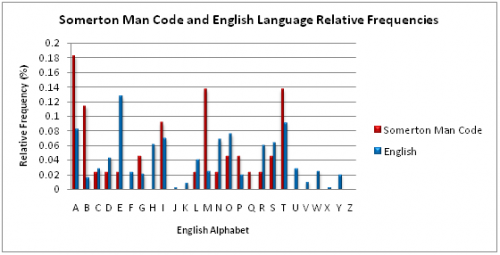

The frequency plot of the initial letters of all words within the Rubaiyat is shown alongside the letter frequency plots of initial letters in the English language and the letters of the code. The results show a generally good correlation between English initials and initials in the Rubaiyat, as might be expected. However, there are discrepancies when compared to the code, including the code clearly having a greater proportion of A’s, B’s and M’s. While a link cannot be ruled out due to the small sample size of the code, it appears that the code is not related to the initial letters of the words in the Rubaiyat poems.
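Counting the initial letters of words, as described for this test, could look roughly like the sketch below. The class and method names are hypothetical, and splitting on every non-letter character is a simplifying assumption (for example, it treats the part of a word after an apostrophe as a separate word).

```java
import java.util.Locale;

/** Hypothetical sketch: frequency of the first letter of each word in a text. */
class InitialFrequency {

    /** Returns 26 counts, index 0 for 'A' through index 25 for 'Z'. */
    static int[] countInitials(String text) {
        int[] counts = new int[26];
        for (String word : text.toUpperCase(Locale.ROOT).split("[^A-Z]+")) {
            if (!word.isEmpty()) {
                counts[word.charAt(0) - 'A']++;
            }
        }
        return counts;
    }

    /** Converts counts to proportions so texts of different lengths can be compared. */
    static double[] toRelative(int[] counts) {
        int total = 0;
        for (int c : counts) {
            total += c;
        }
        double[] relative = new double[counts.length];
        if (total == 0) {
            return relative; // empty text: all proportions stay at zero
        }
        for (int i = 0; i < counts.length; i++) {
            relative[i] = (double) counts[i] / total;
        }
        return relative;
    }

    public static void main(String[] args) {
        double[] freq = toRelative(countInitials("The Moving Finger writes; and, having writ"));
        for (int i = 0; i < freq.length; i++) {
            if (freq[i] > 0) {
                System.out.printf("%c %.3f%n", (char) ('A' + i), freq[i]);
            }
        }
    }
}
```

The same counting could be applied to the first word of each line or poem by passing only those words in.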

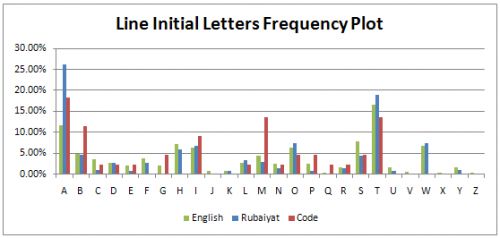

The letter frequency plot of initial letters of poem lines in the Rubaiyat gives a clear result. There is at least one ‘G’ in the code, however, not one line starts with a word beginning with ‘G’. Therefore a link between the code and the initial line letters can be discounted.

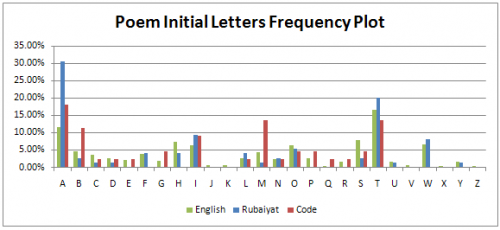

As with the initial letters of lines, a relationship between the code and the initial letters of Rubaiyat poems can also be ruled out. The code contains a ‘G’, while no poem begins with ‘G’; the same reasoning applies to ‘P’. Similarly, there are six ‘M’s in the code (four if the ambiguous M’s are counted as W’s), yet only one poem begins with ‘M’.

Hypothesis 3: The code is generally related to the text in the Rubaiyat.

This hypothesis was tested by adapting the Java text parser code to generate letter frequency plots for all letters in the Rubaiyat poems. The results are displayed in the graph below.

The results show that, while there is very good correlation between the Rubaiyat poems and English text in general, the letter frequency of the code is very different, with significantly larger proportions of M’s, A’s and B’s. While the sample size of 44 letters for the code is too small to rule out the possibility completely, the results indicate that Hypothesis 3 is unlikely.

Pattern Matcher

Review of 2010 Pattern Matching Code

As part of the design process for the pattern matching code, a review of the pattern matching code written for the 2010 project has been conducted. The purpose of this review was to identify the key design goals of the 2010 code, examine the code implementations, identify areas that could be transferred over to our 2011 code and to pinpoint areas that can be improved on. An internal document has been produced providing this review and is summarised below.

The 2010 code had three main functions:

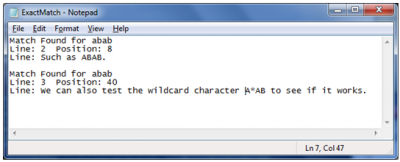

- FindExact – to find exact pattern matches, such as “ABAB”. Also included a wildcard feature in which an arbitrary letter in the middle of a pattern could be symbolised by a wildcard character.

- FindInitialism – to find exact pattern matches for initials of words.

- FindPattern – to find general patterns such as “@#@#” which would detect among others “ABAB” and “DHDH”.
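To make the FindPattern idea concrete, the sketch below checks whether a stretch of text has the same repetition structure as a pattern such as “@#@#”. It is not the 2010 implementation. It assumes that a repeated symbol must stand for the same letter and that different symbols must stand for different letters; the second rule is an assumption, as the report does not state it. All names are hypothetical.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical sketch: match a repetition structure such as "@#@#". */
class StructuralPattern {

    /** True if the window repeats letters exactly where the pattern repeats symbols. */
    static boolean matches(String pattern, String window) {
        if (pattern.length() != window.length()) {
            return false;
        }
        Map<Character, Character> symbolToLetter = new HashMap<>();
        Map<Character, Character> letterToSymbol = new HashMap<>();
        for (int i = 0; i < pattern.length(); i++) {
            char symbol = pattern.charAt(i);
            char letter = window.charAt(i);
            // putIfAbsent returns the earlier binding, or null if this is the first.
            Character boundLetter = symbolToLetter.putIfAbsent(symbol, letter);
            Character boundSymbol = letterToSymbol.putIfAbsent(letter, symbol);
            if ((boundLetter != null && boundLetter != letter)
                    || (boundSymbol != null && boundSymbol != symbol)) {
                return false;
            }
        }
        return true;
    }

    /** Returns every start index in the text where the pattern's structure occurs. */
    static List<Integer> findPattern(String pattern, String text) {
        List<Integer> hits = new ArrayList<>();
        for (int start = 0; start + pattern.length() <= text.length(); start++) {
            if (matches(pattern, text.substring(start, start + pattern.length()))) {
                hits.add(start);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        System.out.println(matches("@#@#", "ABAB"));
        System.out.println(matches("@#@#", "DHDH"));
        System.out.println(findPattern("@#@#", "XABABY"));
    }
}
```

Because the pattern is an input parameter, the same function can search for any structure rather than a fixed list of patterns.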

The 2011 code will be designed to have the same functionality. The improvements will come from increased modularity and flexibility in code design enabling more efficient algorithms and more reuse of code.

To achieve this improvement in flexibility and modularity, several areas have been identified as requiring significant re-work. These are:

- Separate the Initialism code from the Pattern Matching code. This will enable greater code reuse when examining both continuous and initialism pattern matches. For example, FindInitialism can call the same FindExact function after first passing the input through a function that reduces the text to its initials.

- Redesign FindPattern. The 2010 implementation of FindPattern consisted of exhaustively searching for patterns that had been identified as being in the Somerton Man code. We plan our 2011 code to have greater flexibility, able to search for any patterns based on an input parameter. This will require smarter algorithms and a complete redesign of the function.

- Improve input flexibility. Given final design decisions for the Web Crawler, such as how it will output data, are yet to be decided, the input for pattern matching code needs to be flexible and adaptable. This will be done by calling the pattern-matching functions on Strings as opposed to entire files as in the 2010 implementation.
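The first and third points can be sketched together: an exact matcher that works on a String, and an initialism search that reuses it after reducing the text to initials. This is an illustrative sketch only. The wildcard character `*`, the class name and the method signatures are assumptions, not the project's actual design.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch: exact matching on Strings, reused for initialisms. */
class ExactMatcher {

    static final char WILDCARD = '*'; // assumed wildcard symbol

    /** Returns every start index where the pattern occurs; '*' matches any character. */
    static List<Integer> findExact(String pattern, String text) {
        List<Integer> hits = new ArrayList<>();
        for (int start = 0; start + pattern.length() <= text.length(); start++) {
            boolean ok = true;
            for (int i = 0; i < pattern.length() && ok; i++) {
                char p = pattern.charAt(i);
                ok = p == WILDCARD || p == text.charAt(start + i);
            }
            if (ok) {
                hits.add(start);
            }
        }
        return hits;
    }

    /** Reduces text to the upper-cased first character of each whitespace-separated word. */
    static String initials(String text) {
        StringBuilder sb = new StringBuilder();
        for (String word : text.trim().split("\\s+")) {
            if (!word.isEmpty()) {
                sb.append(Character.toUpperCase(word.charAt(0)));
            }
        }
        return sb.toString();
    }

    /** Initialism search: the same matcher applied to the initials (hits are word indices). */
    static List<Integer> findInitialism(String pattern, String text) {
        return findExact(pattern, initials(text));
    }

    public static void main(String[] args) {
        System.out.println(findExact("A*A", "ABACA"));
        System.out.println(findInitialism("M*W", "The Moving Finger writes"));
    }
}
```

Keeping the initials step separate means any other matcher that accepts a String could be reused for initialisms in the same way.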

In summary, a review of the 2010 pattern matching code has been conducted to aid in the development of the 2011 implementation. Areas of improvement have been identified with the aim of improving the flexibility and modularity of the code, enabling more efficient code and greater flexibility in its use.

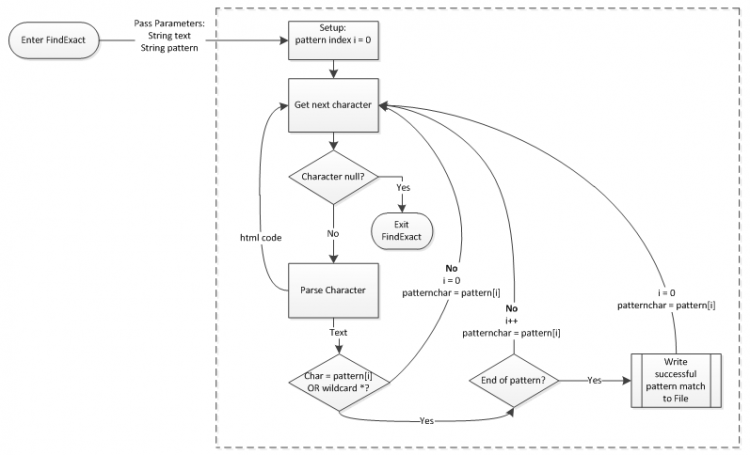

Implementation of Pattern Matcher

Following the review of the 2010 pattern matching code, the development of the 2011 pattern matcher implementation has begun. The pattern matcher is currently still in its early phases of development but is on track as per the Gantt chart schedule.

Current progress has seen the implementation of a FindExact function to detect exact pattern matches in text. The results are currently printed to file and a screenshot of a test is shown below. This implementation of FindExact also allows for wildcard characters. As decided from the review of the 2010 code, the flexibility of the code has been a key design aim. The input is accepted in the form of a String, as interface design decisions between the pattern matcher and web crawler are yet to be made, pending web crawler research. The flexibility to enable the pattern-matching of initials from a String has also been incorporated in the design.

The implementation of the FindExact function is described in the flowchart below.

Web Crawler

Review of 2010 Web Crawler Implementation

As part of the design process for the web crawler, a review of the web crawlers investigated and used for the 2010 project has been conducted. The purpose of this review was to identify strengths and weaknesses of crawlers investigated last year to see if any would meet our requirements. A summary of the review findings is included below.

In 2010, the primary focus of the web crawler implementation was adaptability to pattern matching software. Throughout the project’s lifecycle several different implementations were considered, each not without compromise. The final solution used the open source program HTTrack Website Copier which created structured local copies of the destination websites for the pattern matcher programs to use. Review of the 2010 work was the beginning of the 2011 web crawler investigation and the following section is a review of the findings.

Initially the consideration in 2010 was to have an internet active crawler. The group identified the following requirements as important in their implementation. These attributes were to be operational in all common URL circumstances.

- Take in a base URL from the user

- Traverse the base website

- Pass forward any useful data to the pattern matching algorithm

- Finish after traversing entire website or reaching a specified limit

The first option explored was the Arachnid Web Spider. Early indicators seemed promising with its Java based design easily adaptable to the pattern matching software. Further testing however highlighted an inability to operate with a common character encoding format. Verification of this finding was completed by the 2011 development team and a description is included below. After alterations could not be implemented to function with the problematic character encoding, the 2010 group decided to abandon the Arachnid Web Spider and seek a more suitable alternative.

Failing to find another suitable internet active crawler, the focus shifted towards programs that could achieve similar behaviour locally. The free webpage extraction tool Wget was the first to be considered. Wget was implemented in the C programming language and its open methodology presented opportunities for use with the pattern matcher software. But while early experimentation was again promising, a compatibility issue between the Java based pattern matching software and the C based Wget software could not be resolved. The critical decision was made to abandon Wget in search of a Java based variant.

The open source program HTTrack was deemed to be the worthy replacement. Its implementation in Java nullified any potential compatibility issues with the pattern matching software and other manual controls ensured all specified requirements could be met. The ability to restrain search depth was seen to be particularly valuable. The final 2010 web crawler implementation used HTTrack and the behaviour is best summarised by the following flow chart. The flow chart also shows where the pattern matcher software is required within the web crawler’s operation.

The crawler begins by retrieving a list of pre-specified URLs that have been saved in a text file within a particular computer directory. HTTrack mirrors the specified websites into a structural hierarchy on the computer in a location specified by the user. The pattern matcher software concludes operation by analysing the stored websites.

Results of the Review

All the software chronicled in the 2010 documentation was tested in 2011 as part of this review. The Arachnid Web Spider was the first software studied. As mentioned earlier, the 2010 finding that the spider had an inability to function with select character encoding formats was supported by the tests conducted in 2011. As a consequence it is unlikely the 2011 crawler will make use of the Arachnid Web Spider.

Following the 2010 investigative path, the focus shifted to the Wget webpage extraction tool. Limited documentation about the implementation of the Wget software made confirmation of last year’s results difficult. The 2010 group did identify incompatibility between the C based Wget and the Java based pattern matcher software as a concern in a Progress Report. Without specific interaction information, this finding could not be verified in 2011. A comparison of the Wget program with the HTTrack program showed that the two have many common behavioural characteristics in how webpages are mirrored locally. Thus, rather than speculate on Wget, attention was shifted to HTTrack.

As part of the final 2010 web crawler implementation, the HTTrack program was thoroughly tested. A working version of the crawler as intended by the 2010 group was observed. The results were verified using the video provided as part of the 2010 Final Report. While the solution met the key requirements as specified by the project team, there is limited opportunity for use in the behavioural expansions planned in 2011. Transportability of the crawler between web sources is difficult: although the HTTrack software has the capacity to operate over a larger domain, its use with the pattern matching software was restricted to an internal depth of four and an external depth of zero. If operation were to be extended, computer memory usage would become a concern. These interdependent limitations hinder the web crawler in serving its primary function of data mining for possible solutions to the Somerton Man code.

Test Environment for Web Crawler

In preparation for the development of the web crawler, possible testing environments have been investigated. The purpose of the environment is to provide a safe and simple way in which the web crawler software can be developed, tested and debugged. Web crawlers have complex and intrusive behaviour that can easily get caught in traps or cause problems for the server they are accessing. Thus it is desirable to have a test environment in which testing can be performed without endangering others’ web property.

Research indicated there were two main options available:

- Limiting crawling, either by:

  - Only crawling a certain website (e.g. only crawling pages starting with www.adelaide.edu.au)

  - Limiting crawling to a certain depth (e.g. only crawling for a set number of links)

- A closed environment in a local directory.
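For comparison with the option that was chosen, the first option (limiting the crawl by website and by depth) could be expressed as a simple scope check like the sketch below. The class name, method name and parameters are hypothetical; only `java.net.URI` from the standard library is used.

```java
import java.net.URI;

/** Hypothetical sketch: decide whether a link is within the allowed crawl scope. */
class CrawlScope {

    /**
     * A link is in scope if it is within the depth limit and its host matches
     * the allowed host. Malformed or relative URLs are treated as out of scope.
     */
    static boolean inScope(String url, String allowedHost, int depth, int maxDepth) {
        if (depth > maxDepth) {
            return false;
        }
        try {
            String host = URI.create(url).getHost();
            return host != null && host.equalsIgnoreCase(allowedHost);
        } catch (IllegalArgumentException e) {
            return false; // URI.create rejects strings that are not valid URIs
        }
    }

    public static void main(String[] args) {
        String host = "www.adelaide.edu.au";
        System.out.println(inScope("http://www.adelaide.edu.au/about/", host, 2, 4));
        System.out.println(inScope("http://example.com/", host, 1, 4));
        System.out.println(inScope("http://www.adelaide.edu.au/deep/", host, 5, 4));
    }
}
```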

Of these choices, the closed environment in a local directory was chosen as the superior option because it provides greater control over the webpage content and the closed environment means there is no chance of interfering with other people’s internet property if the crawler doesn’t behave as expected. One disadvantage is it requires development of html code to generate the closed environment for testing. This is far outweighed by the benefit of additional control over the testing domain.

To date, we have written a simple html file system consisting of several pages which contain both information content and links to one another. No further work is planned on the file system until the web crawler is ready for test.

Cipher Cross-off List

Previous Adelaide University studies into the Somerton Man’s code have concluded that the mysterious code left behind is not just random letters; it is in fact a code. This raises the question: What mechanism was used to generate the code? In an attempt to answer this question, the Cipher Cross-off List was created. The Cipher Cross-off List is a list of identified cipher techniques that were prevalent at the time of the Somerton Man murder. The purpose of the list is to methodically test the identified cipher schemes in an attempt to identify the technique most likely to have been used to encrypt the Somerton Man code.

In 2011 the work in this area has led to the creation of an official Cipher Cross-off List, which is available on the project website.

Both project team members have assisted with the progress of the Cipher Cross-off List. Thus far, the goal has been for each team member to test one cipher per week. The following ciphers have already been crossed off the list in 2011:

- Alphabet Reversal Cipher (Patrick)

- ADFGVX Cipher (Patrick)

- Affine Cipher (Patrick)

- Two-square Cipher (Patrick)

- Rail Fence Cipher (Patrick)

- Shift Cipher (Steven)

- Auto-Key Cipher (Steven)

- VIC Cipher (Steven)

- Vigenere Cipher (Steven)

- Hill Cipher (Steven)

- Bifid Cipher (Steven)

- Trifid Cipher (Steven)

A brief description of each cipher and the reasoning for being “crossed off” the Cipher Cross-off List follows:

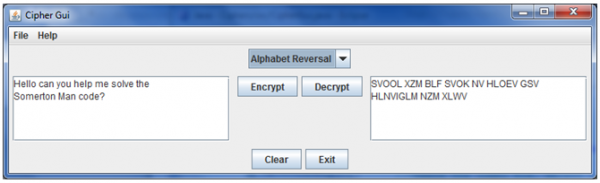

Alphabet Reversal Cipher

The Alphabet Reversal cipher is a substitution cipher where A becomes Z, B becomes Y, C becomes X etc. This leads to the following encoding and decoding key (read in vertical order):

| A | B | C | D | E | F | G | H | I | J | K | L | M | N | O | P | Q | R | S | T | U | V | W | X | Y | Z |

| Z | Y | X | W | V | U | T | S | R | Q | P | O | N | M | L | K | J | I | H | G | F | E | D | C | B | A |

Thus, for example, "HELLO" becomes "SVOOL". This cipher has been tested on the Tamam Shud code by writing a Java program that takes input from the command line or from a text file and produces output in reversed form. The result of running a file containing the code through the program turns the input of:

Into:

As can be seen, there can be no meaning deciphered from the alphabet-reversed text and thus we have ruled out the Alphabet Reversal Cipher as being used in encrypting the Somerton Man's code.
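The substitution described in this section is small enough to sketch in a few lines. The project's own program was written in Java; the Python version below is only an illustrative equivalent, and the function name `alphabet_reversal` is an assumption.

```python
import string

# Map each uppercase letter to its mirror image in the alphabet (A<->Z, B<->Y, ...).
REVERSAL = str.maketrans(string.ascii_uppercase, string.ascii_uppercase[::-1])


def alphabet_reversal(text: str) -> str:
    """Apply the alphabet reversal; non-letters are passed through unchanged."""
    return text.upper().translate(REVERSAL)


print(alphabet_reversal("HELLO"))  # SVOOL
```

Because the mapping is its own inverse, the same function both encrypts and decrypts.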

ADFGVX Cipher

The ADFGVX Cipher was introduced to public knowledge in March 1918. It was used primarily by the German Army during World War One. The technique used for encryption produces a ciphertext that contains only the English letters A, D, F, G, V and X, hence the name. The ADFGVX Cipher uses transposition and substitution with bipartite fractionation. Given the lengthy methodology used for encryption and decryption, full details have not been provided. Further information can be accessed here.

A review of the Somerton Man code showed it contained 16 different English alphabet letters. Thus any cipher methodology that produces a ciphertext with fewer than 16 different letters can be trivially ruled out. This includes the ADFGVX Cipher, which produces only six. It was also considered that false letters may have been used to mask the ADFGVX cipher. For this, the relative frequency plot for the Somerton Man code was examined. A graph of the relative frequencies of letters in the Somerton Man code compared with the relative English letter frequencies is shown below. There is no evidence that the six letters expected in an ADFGVX ciphertext are more prevalent in the Somerton Man code than in the English language. The ADFGVX Cipher using a weak mask was consequently ruled out of the investigation. Furthermore, apart from the letter “A”, the relative frequencies of the six letters are low, and three do not appear at all. Thus it was also concluded that a strong mask was not used. The ADFGVX Cipher was discounted from all further investigation following these results.
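The two counts used in this argument can be checked directly. The sketch below assumes the transcription of the code as it appears in the Rail Fence Cipher section of this report (four lines of 9, 11, 11 and 13 letters); the variable names are illustrative only.

```python
# Transcription of the code as given in the Rail Fence Cipher section (assumed).
CODE = "MRGOABABD" "MTBIMPANETP" "MLIABOAIAQC" "ITTMTSAMSTGAB"

distinct_letters = set(CODE)
# Letters of an ADFGVX ciphertext that never occur in the code.
missing_adfgvx = sorted(set("ADFGVX") - distinct_letters)

print(len(distinct_letters))  # 16
print(missing_adfgvx)         # ['F', 'V', 'X']
```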

Affine Cipher

Like the Shift Cipher, the Affine Cipher is a mono-alphabetic substitution cipher. It is commonly categorised as a block cipher with a length of 1. Each letter of the plaintext is therefore encrypted independently from the other letters.

Encryption method: $y = (ax + b) \bmod 26$

Decryption method: $x = a^{-1}(y - b) \bmod 26$

The rules that were examined for encryption and decryption are shown above, where x represents a given plaintext letter and y represents the corresponding ciphertext letter. Given that there were 26 possible values for b (0 to 25) within the modulo 26 English alphabet and 12 possibilities for a, the total number of possible keys for the Affine Cipher was 12 × 26 = 312. The limitation imposed on a is a consequence of requiring a multiplicative inverse within the modulo 26 domain, which exists only when a shares no common factor with 26.

In 2011, a Java program was written to test all 312 possible key variations. The output from the program has been added to the Cipher Cross-off List wiki page and is available here. The results did not present any understandable text, and as such, the Affine Cipher has been ruled out of any future investigation.
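A minimal sketch of this exhaustive test is given below. It is not the project's Java program; it assumes the standard affine rules (ciphertext = a × plaintext + b, modulo 26, with A = 0), and the function names are hypothetical. The modular inverse via `pow(a, -1, 26)` requires Python 3.8 or later.

```python
from math import gcd

M = 26  # size of the English alphabet


def affine_encrypt(plaintext: str, a: int, b: int) -> str:
    return "".join(chr((a * (ord(c) - 65) + b) % M + 65) for c in plaintext)


def affine_decrypt(ciphertext: str, a: int, b: int) -> str:
    a_inv = pow(a, -1, M)  # multiplicative inverse of a modulo 26
    return "".join(chr((a_inv * (ord(c) - 65 - b)) % M + 65) for c in ciphertext)


def all_keys():
    """Every (a, b) pair for which a is invertible modulo 26."""
    return [(a, b) for a in range(M) if gcd(a, M) == 1 for b in range(M)]


def all_decryptions(ciphertext: str):
    """Candidate plaintexts for every key, to be inspected for readable text."""
    return {(a, b): affine_decrypt(ciphertext, a, b) for a, b in all_keys()}


print(len(all_keys()))  # 312
```

The key with a = 1 and b = 0 leaves the text unchanged, and the keys with a = 1 reproduce the shift cipher.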

Two-square Cipher

The Two-square Cipher is a digraph cipher: it encrypts letters in pairs. This means that the ciphertext should contain an even number of letters. In the case of the Somerton Man's code, the lines consist of 9, 11, 11 and 13 letters, all of which are odd numbers. This would indicate that a simple digraph encryption technique such as the Two-square Cipher has not been used.

Rail Fence Cipher

The Rail Fence Cipher is a geometric cipher. It was identified as a possibility because of the four distinct lines in the Somerton Man code indicating it could be a 4-rail Rail Fence cipher. A Rail Fence Cipher involves writing out the unencrypted message in a zigzag and then reading it in rows to form the encrypted version. For example, take "Rail Fence Cipher" in a 3-rail cipher:

Rail 1 => R . . . F . . . E . . . H . .

Rail 2 => . A . L . E . C . C . P . E .

Rail 3 => . . I . . . N . . . I . . . R

This example forms the code: RFEH ALECCPE INIR.
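The zigzag procedure can be sketched as follows. This is an illustrative implementation of the standard Rail Fence Cipher, not the code used by the project, and the function names are assumptions.

```python
def rail_pattern(length: int, rails: int) -> list:
    """Rail index (0 = top) visited at each position of the zigzag."""
    if rails == 1:
        return [0] * length
    cycle = 2 * (rails - 1)
    return [min(i % cycle, cycle - i % cycle) for i in range(length)]


def rail_fence_encrypt(text: str, rails: int) -> str:
    pattern = rail_pattern(len(text), rails)
    # Read the zigzag row by row, top rail first.
    return "".join(ch for r in range(rails) for ch, p in zip(text, pattern) if p == r)


def rail_fence_decrypt(cipher: str, rails: int) -> str:
    pattern = rail_pattern(len(cipher), rails)
    # A stable sort by rail gives the order in which plaintext positions were read out.
    order = sorted(range(len(cipher)), key=lambda i: pattern[i])
    plain = [""] * len(cipher)
    for ch, pos in zip(cipher, order):
        plain[pos] = ch
    return "".join(plain)


print(rail_fence_encrypt("RAILFENCECIPHER", 3))  # RFEHALECCPEINIR
```

Note that this standard decryption fixes the length of each rail from the message length, whereas the test described below treats the four written lines of the code as the rails.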

We have discounted the Rail Fence Cipher as being used in the Tamam Shud code for several reasons. Firstly, it is purely a transposition cipher, and previous studies have shown that the code is not consistent with a transposition of English text, as indicated by its letter frequency plot and the presence of a 'Q' in the code without a 'U'. The final proof comes from testing the code itself:

M R G O A B A B D

M T B I M P A N E T P

M L I A B O A I A Q C

I T T M T S A M S T G A B

Decrypting this forms: IMMMTLTIBRIATBMGPOMAAONITAEATQSCPBAAMBSDTGAB. There are no recognisable words in this and the top and bottom lines both overflow.

Shift Cipher

The shift cipher is a mono-alphabetic substitution cipher. Each letter is shifted by the same amount within the alphabet that is used, and the modulo operator ensures any shift remains within the alphabet. One implementation of the shift cipher is famously known as the Caesar Cipher, but while the Caesar Cipher uses only one value for the key, the following examination explores all available options. The encryption and decryption methodologies are shown below, where x represents a given plaintext letter, y represents the corresponding ciphertext letter and k is the key, which is restricted by the size of the alphabet used.

Encryption method: $y = (x + k) \bmod 26$

Decryption method: $x = (y - k) \bmod 26$

The shift cipher was tested in 2011 using Java code that cycled through all 25 key options within the English alphabet (the 26 possible shifts, reduced by one to remove the zero-shift case). The results have been uploaded and can be found here. Since the results show no understandable text, the shift cipher has been removed from future project investigations.
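A short sketch of such an exhaustive shift test is shown below. It is not the project's Java code; it assumes uppercase input with A = 0, and the function names are illustrative.

```python
def shift_decrypt(ciphertext: str, k: int) -> str:
    """Shift every letter back by k places, wrapping around the alphabet."""
    return "".join(chr((ord(c) - 65 - k) % 26 + 65) for c in ciphertext)


def all_shifts(ciphertext: str) -> dict:
    """Candidate plaintexts for the 25 non-zero keys."""
    return {k: shift_decrypt(ciphertext, k) for k in range(1, 26)}


print(shift_decrypt("KHOOR", 3))  # HELLO
```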

It is worth noting that the results of this test will be confirmed with the future implementation of a testing procedure for the Affine Cipher corresponding to the case of a = 1.

Auto-Key Cipher

The auto-key cipher is a stream cipher. Stream ciphers use a different key for every block, as opposed to using the same key repeatedly as is the case with block ciphers. In the case of the Auto-Key cipher, the letters of the message are used as the input key stream, apart from the first key letter, which is chosen by the sender. The encryption and decryption methodologies that were tested are shown below, where k represents the initial key, $x_i$ represents a given plaintext letter and $y_i$ represents the corresponding ciphertext letter.

Encryption method: $y_1 = (x_1 + k) \bmod 26$ and $y_i = (x_i + x_{i-1}) \bmod 26$ for $i \geq 2$

Decryption method: $x_1 = (y_1 - k) \bmod 26$ and $x_i = (y_i - x_{i-1}) \bmod 26$ for $i \geq 2$

Only addition is specified within the above formula for encryption; however, the 2011 project team identified that a variation to the methodology could be present. Specifically, subtraction could have been used in place of the addition. Both were considered in the investigation, as well as alternation between addition and subtraction.

Furthermore since the input key stream depends on the message being encoded, the line order of the Somerton Man code is also important. Different line orders yield different message text and uncertainty within the code relating to the crossed out line was a concern in testing the Auto-Key methodology. To ensure thoroughness within the investigation different scenarios were considered. These were:

- The original line order as appears under the Alphabet Reversal Cipher section

- Swapping the order of the second and third lines within the original code. This is to consider the circumstance where a mistake was made when generating the code and the third line is a replacement for the crossed out line.

- Each line separately with the same key used each time.

A Java program was written to test each of the above scenarios with all three possible variations of the decryption formula. Examination of the program’s output file by one of the project team members revealed there was no meaningful English text and as a consequence the Auto-Key Cipher has been ruled out as the technique used to generate the code of the Somerton Man. The output file has been uploaded and is available here.
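The decryption variants can be sketched with a single function. This is an illustration only, not the project's Java program: it assumes the usual auto-key scheme in which each plaintext letter becomes the key for the next letter, and it models the addition, subtraction and alternating variants with a hypothetical `signs` parameter (+1 where the key was added during encryption, -1 where it was subtracted).

```python
from itertools import cycle


def autokey_encrypt(plaintext: str, k: int) -> str:
    """Standard auto-key encryption: add the previous plaintext letter (k for the first)."""
    out, key = [], k
    for ch in plaintext:
        x = ord(ch) - 65
        out.append(chr((x + key) % 26 + 65))
        key = x
    return "".join(out)


def autokey_decrypt(ciphertext: str, k: int, signs=(1,)) -> str:
    """Undo auto-key encryption; `signs` is cycled over the letters of the text."""
    out, key = [], k
    for ch, s in zip(ciphertext, cycle(signs)):
        x = (ord(ch) - 65 - s * key) % 26
        out.append(chr(x + 65))
        key = x  # the recovered plaintext letter keys the next position
    return "".join(out)


VARIANTS = {"add": (1,), "subtract": (-1,), "alternate": (1, -1)}

print(autokey_encrypt("RENDEZVOUS", 8))  # ZVRQHDUJIM
```

Testing a scenario then amounts to calling `autokey_decrypt` for each of the 26 initial keys and each entry of `VARIANTS`, and inspecting the output for readable text.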

VIC Cipher

The VIC Cipher was a cipher scheme issued by the Soviet Union. The version that was examined in the following investigation was the one adapted to the English language thus coinciding with the Somerton Man code. Further information about implementation of the VIC Cipher is available here. Use of the VIC Cipher to generate the Somerton Man code can be trivially disproved as its formula outputs ciphertext consisting only of numerical blocks of length five while the Somerton Man code contains only letters.

For completeness, a conversion between numbers and letters was considered. Two cases were examined: the first, that there was a two-digit number representing each letter in the code, and the second, using the conventional representation of Z26 with A = 0, B = 1, etc. Both instances failed to produce a numerical representation whose length was a multiple of five, which would be inherent in the use of the VIC Cipher system. The possibility that dummy variables could have been used to pad the size was dismissed as too remote, and it was decided no change would be made to the original conclusion that the VIC Cipher was not used.

As mentioned above the VIC Cipher scheme that was investigated was the version adapted to the English language thus there is an opportunity for future exploration of alternative languages.

Vigenere Cipher

Investigation of the Vigenere Cipher scheme in 2009 ruled that it had not been used to produce the code of the Somerton Man. Upon reviewing the findings, the 2011 project team concluded that further enquiries needed to be pursued before the Vigenere Cipher could be dismissed. An additional benefit of this investigation was the opportunity to use results to narrow testing of the Hill Cipher, covered below.

As mentioned in the 2009 Final Report, the Vigenere Cipher is a block cipher with the block length determined by the length of the keyword used. The 2009 investigation considered the keyword “LEMON” and thus their statistical evaluation considered a block length of five. They did not consider if another block length was used. The investigation in 2011 attempted to identify if there were any likely block lengths besides size five for the Somerton Man code and test the Vigenere Cipher in these instances. The block length testing results were not constrained to the Vigenere Cipher alone. As block length was considered statistically, the findings are applicable to any other block cipher mechanism.

The mechanism used to identify likely block lengths was the Index of Coincidence. It is defined as the probability that any two randomly chosen characters within a text (be that plaintext or ciphertext) are the same letter. Calculation of the probability for the modulo 26 case is shown below, where $f_i$ denotes the number of occurrences of the i-th letter of the input text alphabet and n represents the number of letters in the message.

$$I_c(x) = \frac{\sum_{i=0}^{25} f_i (f_i - 1)}{n(n - 1)}$$

For a random string of text the Index of Coincidence gives $I_c(x) = 26(1/26)^2 \approx 0.038$, and for a string of English text $I_c(x) \approx 0.065$. By dividing the ciphertext into blocks and calculating the index of coincidence for each block, the values can be compared to the English case. If there is a strong correlation between every block and the value for the English case above, the number of blocks can be seen as a likely block length. For this method to succeed, it is critical that the division into blocks is equivalent to writing the ciphertext column by column into a matrix of m rows, where m is the number of blocks, so that each row forms one block. An example of this is shown below for three blocks and the ciphertext “SECRET MESSAGE HIDDEN”.

Block 1 => S R M S E D N

Block 2 => E E E A H D

Block 3 => C T S G I E
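The block division and the Index of Coincidence calculation can be sketched as follows. The project's testing was automated with a MATLAB script; this Python version is only an illustration, it assumes the standard definition (the sum of f(f - 1) over all letters, divided by n(n - 1)), and its function names are hypothetical.

```python
from collections import Counter


def index_of_coincidence(text: str) -> float:
    """Probability that two letters drawn without replacement from `text` are equal."""
    n = len(text)
    if n < 2:
        return 0.0
    counts = Counter(text)
    return sum(f * (f - 1) for f in counts.values()) / (n * (n - 1))


def split_into_blocks(text: str, m: int) -> list:
    """Block j holds every m-th letter starting at position j (non-letters are dropped)."""
    letters = [c for c in text.upper() if c.isalpha()]
    return ["".join(letters[j::m]) for j in range(m)]


print(split_into_blocks("SECRET MESSAGE HIDDEN", 3))  # ['SRMSEDN', 'EEEAHD', 'CTSGIE']
```

For a candidate block length m, the index of each block returned by `split_into_blocks(ciphertext, m)` would then be compared with the English value of approximately 0.065.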

For the Somerton Man code the Index of Coincidence method was used to test all possible block lengths. With the code of length 44 this corresponded to the integer set 1 to 44. A MATLAB script was written to automate the testing. The results showed that if a block cipher was used to generate the Somerton Man code, lengths of 3 and 7 were most likely used.

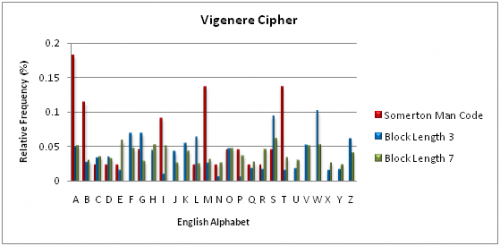

Frequency analysis of the Vigenere Cipher was conducted using both likely block length candidates with code words of “SOS” and “WOOMERA” respectively. For consistency the same English text was used in each instance. A graph comparing the results to the Somerton Man code is shown below.

Comparison between the two Vigenere Ciphers shows strong correlation. Only four letters differ in relative frequency by more than 50% between the two cases. Conversely, the Somerton Man code possesses characteristics that differ significantly from both the three-letter and seven-letter cases. Of the sixteen letters that appear in the Somerton Man code, only the letter “O” can be favourably compared to the Vigenere cases. The remaining fifteen letters are not consistent with them.

These results are not completely conclusive given the short length of the Somerton Man code. They are however sufficient for the 2011 project team to remove the Vigenere Cipher from further enquiry.

Hill Cipher

The Hill Cipher was invented by Lester S. Hill in 1929. It is a polygraphic polyalphabetic substitution cipher based on linear algebra. The encryption and decryption methodologies that were tested are defined by the formulas shown below.

Encryption method:

Decryption method:

The matrix A is required to be invertible within the alphabet used, for English this is modulo 26.

Given that it is a block cipher, the results of the Index of Coincidence method were extremely helpful for analysis of the Hill Cipher. The 2011 investigation concluded that, since the code was generated by hand, encryption key matrix sizes of 2x2 and 3x3 were the most practically feasible. However, the findings of the Index of Coincidence method showed the most likely block lengths were 3 and 7. A block length of two, corresponding to a 2x2 encryption key matrix, was deemed unlikely. Furthermore, a block length of two would yield a digraph cipher, meaning the ciphertext would be generated in pairs. The Somerton Man code contains lines consisting of 9, 11, 11 and 13 letters, so no line has an even number of letters. From this and the results of the Index of Coincidence method, it was concluded the Hill Cipher using a dimension two encryption key could be ruled out as the source of the Somerton Man code. As only the first of the four lines has a length that is a multiple of three, a Hill Cipher using a dimension three encryption key was also ruled out.
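To illustrate why a dimension two key forces the ciphertext to come in pairs, a minimal sketch of a 2x2 Hill Cipher is given below. It assumes the column-vector convention (ciphertext pair = key matrix × plaintext pair, modulo 26), which may differ from the convention in the formulas tested by the project; the function names and the example key are illustrative only. The modular inverse via `pow(..., -1, 26)` requires Python 3.8 or later.

```python
from math import gcd


def is_valid_key(key) -> bool:
    """A 2x2 key is usable only if its determinant is invertible modulo 26."""
    (a, b), (c, d) = key
    return gcd((a * d - b * c) % 26, 26) == 1


def inverse_key(key):
    """Inverse of a 2x2 key matrix modulo 26 (adjugate times inverse determinant)."""
    (a, b), (c, d) = key
    det_inv = pow((a * d - b * c) % 26, -1, 26)
    return ((d * det_inv % 26, -b * det_inv % 26),
            (-c * det_inv % 26, a * det_inv % 26))


def hill_apply(text: str, key) -> str:
    """Multiply each letter pair by the key; use inverse_key(key) to decrypt."""
    if len(text) % 2:
        raise ValueError("a 2x2 Hill Cipher needs an even number of letters")
    (a, b), (c, d) = key
    out = []
    for i in range(0, len(text), 2):
        x0, x1 = ord(text[i]) - 65, ord(text[i + 1]) - 65
        out.append(chr((a * x0 + b * x1) % 26 + 65))
        out.append(chr((c * x0 + d * x1) % 26 + 65))
    return "".join(out)


KEY = ((3, 3), (2, 5))          # example key, determinant 9
print(hill_apply("HELP", KEY))  # HIAT
```

Applying `hill_apply` to a line of odd length, such as any of the four lines of the code, raises the error, which mirrors the parity argument above.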

Bifid Cipher

The Bifid Cipher was first published in 1901. It combines a Polybius square with transposition to achieve fractionation. The fractionation makes each ciphertext character depend on two plaintext characters, as in the Playfair cipher assessed in 2009. Further information about the Bifid Cipher methodology can be found here.

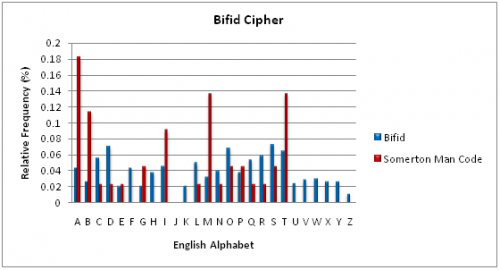

To test the Bifid Cipher mechanism a known plain text was encoded and the resultant ciphertext was letter frequency analysed and compared to the Somerton Man code. A graph of the relative frequency of each English alphabet letter is shown below. The absence of the letter J in the case of the Bifid Cipher results is in accordance with the encryption methodology where the letters “I” and “J” are represented by the former only.

Comparison of the Bifid encryption results with the Somerton Man code shows a weak correlation. The results for the Bifid Cipher case show a distribution between all possible ciphertext letters with a deviation significantly smaller than the Somerton Man code. These results were sufficient to conclude that the Bifid Cipher mechanism had not been used to generate the Somerton Man code. The conclusion is not definitive given the small sample size of the Somerton Man code. An interesting observation is that the letter “J” is absent in both results.

Trifid Cipher

The Trifid Cipher was invented in 1901 following publication of the Bifid Cipher. It extends the Bifid Cipher into a third dimension, which achieves fractionation in which each ciphertext character depends on three plaintext characters. Further information about the Trifid Cipher and an example of the encryption methodology can be found here. As the Trifid Cipher requires 27 ciphertext letters, the full-stop was used for the additional character, as in the reference material.

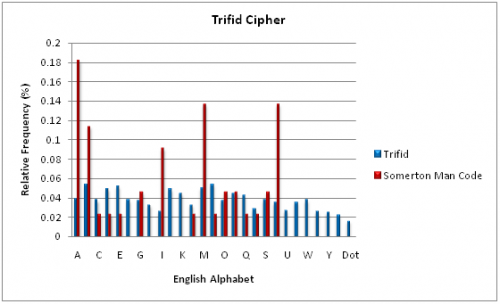

Since the Somerton Man code did not contain any characters beyond the traditional English Alphabet, the Trifid Cipher mechanism could not be trivially discounted. Testing therefore followed the same procedure as the Bifid Cipher; a known plaintext was encoded and the resultant ciphertext was letter frequency analysed and compared to the Somerton Man code. The relative frequency of each English Alphabet letter is shown in the graph below, with the “Dot” letter representing the 27th ciphertext character.

The Trifid Cipher shows an approximately even distribution across all ciphertext letters. The Somerton Man code in comparison is uneven, with the proportions of the letters “A”, “B”, “M” and “T” much larger. From these results it was decided that the Trifid Cipher had not been used to generate the Somerton Man code. However, as was the case with the Bifid Cipher, the small sample size of the Somerton Man code prevents a definitive conclusion being reached.

Cipher GUI

For the purposes of investigating ciphers for the Cipher Cross-off List, large amounts of code have been written by both project team members to encrypt and decrypt text. Rather than let this code fall into disuse once the tests are complete, creation of a graphical user interface (GUI) has been proposed. The GUI will have a list of ciphers to choose from and the option to encrypt or decrypt user-inputted text. The Cipher GUI will also be designed to have developmental tools integrated into it to assist in the analysis of data encryption results. More information can be seen in the Work for Remainder of Project section.

The Cipher GUI is currently in the early stages of development. A prototype implementing the Alphabet Reversal Cipher is shown below.

Work for Remainder of Project

Web Crawler and Pattern Matcher

Web Crawler

Given the complexity involved in web crawlers and the current progress being limited to research of implementation options, there is much work still to do on the web crawler.

Continued work on the web crawler will coincide with the planned schedule. Following the mid-year exam period, work on an implementation will commence. This will use the investigation of the 2010 web crawler in addition to the research conducted into potential implementations. An active "well-behaved" web crawler must abide by the “robots.txt” protocol, which specifies what content web crawlers are able to retrieve from websites. Failure to do so could result in litigation. As such, it is the current intention of the project team to utilise existing software that adheres to “robots.txt”.

Potential software includes the HTTrack program used by the 2010 project group. The mirroring component of its behaviour is undesirable. However, since the source code for the program is freely available, the unwanted component can be removed and other behaviour manipulated as required. In addition to HTTrack, other software will be further investigated before a final decision is made.
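As an illustration of what adhering to “robots.txt” involves, the sketch below uses the `urllib.robotparser` module from the Python standard library to decide whether a URL may be fetched. It is not part of the project's planned implementation; the rules in `EXAMPLE_ROBOTS`, the user agent name `SomertonCrawler` and the example URLs are all hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content: all crawlers are barred from /private/.
EXAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(EXAMPLE_ROBOTS)
print(parser.can_fetch("SomertonCrawler", "http://www.example.com/private/page.html"))  # False
print(parser.can_fetch("SomertonCrawler", "http://www.example.com/public/index.html"))  # True
```

A well-behaved crawler would perform this check for every URL before requesting it.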

Compatibility between the web crawler and text analysis algorithms has already been discussed by the 2011 project team to avoid a conflict such as the one that occurred in 2010. Currently there is no concern, however this will be considered when deciding on a web crawler implementation option to ease the integration of the two systems into a single interface.

Pattern Matcher

Of the pattern matching software, to date only the FindExact function has been implemented, as described earlier in the section on Work Completed to Date. This leaves the design, implementation and test of several functions to be completed, including:

- FindPattern, the generic pattern finding application which will search for all patterns of letters. E.g. Search for ‘@#@#’ will return matches for ‘ABAB’, ‘HKHK’, ‘FMFM’ etc.

- FindInitialism (exact), the specific initialism matcher. E.g. a search for ‘ABAB’ would return true for ‘A big Asteroid belt’.

- FindInitialism (pattern), the generic initialism pattern finding application, similar to above. E.g. a search for ‘@#@#’ would match ‘Go Port, Go Power’ as well as ‘Crows suck. Can’t succeed.’
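The matching rules in the examples above can be sketched as follows. This is not the project's implementation: the function names are illustrative, and it assumes that different pattern symbols (such as '@' and '#') must stand for different letters and that matching ignores case.

```python
import re


def matches_pattern(pattern: str, letters: str) -> bool:
    """True if `letters` repeats its letters exactly where `pattern` repeats its symbols."""
    if len(pattern) != len(letters):
        return False
    forward, backward = {}, {}
    for p, c in zip(pattern, letters.upper()):
        # Each symbol maps to one letter, and each letter to one symbol.
        if forward.setdefault(p, c) != c or backward.setdefault(c, p) != p:
            return False
    return True


def find_pattern(pattern: str, text: str) -> list:
    """All runs of letters in `text` with the same structure as `pattern`."""
    n = len(pattern)
    windows = (text[i:i + n] for i in range(len(text) - n + 1))
    return [w for w in windows if w.isalpha() and matches_pattern(pattern, w)]


def initialism(phrase: str) -> str:
    """First letter of every word, in upper case."""
    return "".join(w[0].upper() for w in re.findall(r"[A-Za-z][A-Za-z']*", phrase))


print(find_pattern("@#@#", "XXHKHKZZ"))                              # ['HKHK']
print(initialism("A big Asteroid belt") == "ABAB")                   # True
print(matches_pattern("@#@#", initialism("Go Port, Go Power")))      # True
```

Under these assumptions, the exact initialism search compares `initialism(phrase)` with the search string, while the pattern version passes it to `matches_pattern`.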

Also yet to be implemented is the function to extract URLs for recursive web-crawling. This will be implemented during the system integration phase, when the interfacing requirements with the web crawler are better understood. It is also possible that the web crawler will provide most of the code needed for this function. However, detection algorithms will need to be flexible to account for the different styles in which URLs can be written. This includes handling whitespace, capital versus lowercase letters, and different quotation marks.[5]

For example, the following two links are both valid and point to the same URL.

- <A HREF = "http://www.adelaide.edu.au/" >

- <a href=http://www.adelaide.edu.au/>
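A tolerant extraction step could be sketched with a regular expression that ignores case, allows whitespace around the equals sign and accepts double-quoted, single-quoted or unquoted values. The sketch below is an assumption about how such a function might look, not the project's code; it targets the standard HTML `href` attribute, and the names `HREF_RE` and `extract_urls` are hypothetical. A full HTML parser would be more robust for real pages.

```python
import re

HREF_RE = re.compile(
    r"""<a\b[^>]*?\bhref\s*=\s*                  # anchor tag and attribute, optional spaces
        (?: "([^"]*)" | '([^']*)' | ([^\s>]+) )  # double-quoted, single-quoted or bare value
    """,
    re.IGNORECASE | re.VERBOSE,
)


def extract_urls(html: str) -> list:
    """Return the link targets of all anchor tags found in `html`."""
    urls = []
    for match in HREF_RE.finditer(html):
        # Exactly one of the three alternatives captured the value.
        value = next(g for g in match.groups() if g is not None)
        urls.append(value.strip())
    return urls


page = '<A HREF = "http://www.adelaide.edu.au/" > <a href=http://www.adelaide.edu.au/>'
print(extract_urls(page))  # ['http://www.adelaide.edu.au/', 'http://www.adelaide.edu.au/']
```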

System Integration

Following completion and sufficient individual testing of the pattern matcher and web crawler, the two systems will need to be integrated. As detailed in the design diagram above, correct functional interaction between the web crawler and pattern matching algorithms is important. In accordance with the technical requirements it will involve ensuring the web crawler passes its downloaded data to the pattern matcher and the pattern matcher passes URLs to the web crawler's queue.

Cipher Cross-off List

Work on the Cipher Cross-off List is expected to continue until completion of the project; it is an ongoing task as there are many possible ciphers to test. Both project members expect to continue working towards the goal of identifying and testing at least one cipher per week for the remainder of the project, excluding the mid-year exam period.

Cipher GUI

The Cipher GUI is still in the early stages of development. Currently only the Alphabet Reversal Cipher is implemented, leaving many ciphers still to add including but not limited to the Affine cipher, the Vigenere cipher and Caesar cipher.

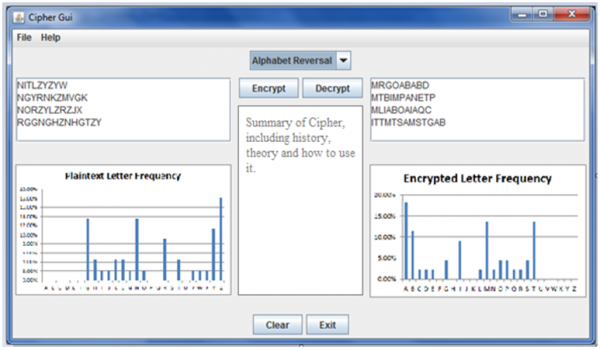

The project team also plan to make some improvements and add additional features to the current prototype shown in the previous section. These include adding:

- Cipher Summary text field. This field is where a brief history of the cipher can be put along with directions for its use.

- Letter Frequency Plots. In order to help see what the cipher does to letter frequencies in changing plaintext to encrypted text, letter frequency plots are planned for both the before (plaintext) and after (encrypted text) text fields.

A graphically enhanced design of the proposed Cipher GUI is shown in the figure below, including both the Cipher Summary text field and the before and after letter frequency plots.

Project Deliverables and Closeout

To ensure satisfactory closeout of the project in 2011 several products and corresponding documentation must be delivered. The products include:

- Integrated web crawling pattern matcher

- The Cipher GUI

- Project Poster

Supporting documentation will include:

- Final project report (due Oct. 21)

- Video on the project (due Oct. 21)

Other presentations are also required, including:

- Final Seminar (due Sept. 30)

- Project Exhibition and Demonstration (due Oct. 28)

The web crawler, pattern matcher and cipher GUI are already under development as summarised in this report. Work on the other deliverables is yet to commence in accordance with the planned schedule included below.

Project Management

Project Schedule

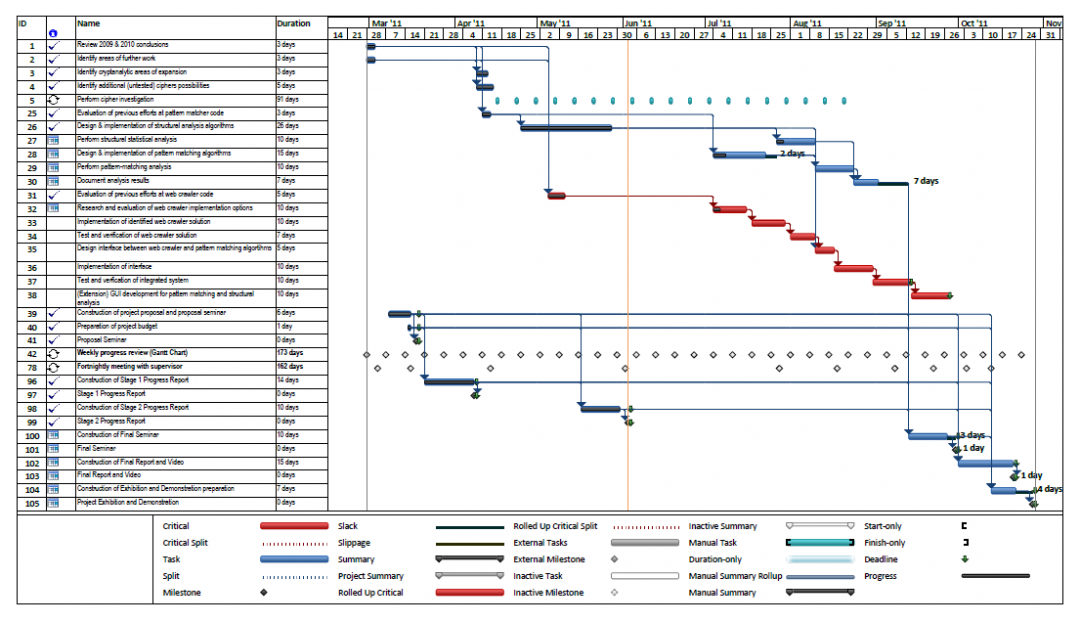

Gantt Chart

The project schedule revised to the current stage of development is shown in the Gantt chart above. Progress and planned dates are accurate as of June 3, 2011. After the submission of the Stage 2 Progress Report on June 3, the remaining project milestones are the Final Seminar (30 September), Final Report accompanied by a video (21 October) and the Project Exhibition including demonstrations (28 October).

The Gantt chart shows the first two project milestones, the Proposal Seminar and the Stage 1 Progress Report, were completed and delivered in accordance with the planned dates. The project team also anticipates the remaining semester 1 milestone, the Stage 2 Progress Report, will be successfully submitted. In addition to the milestones, the other work completed in accordance with the planned schedule has been extensive. This is evident in the relevant sections above.

Throughout the semester the schedule has been continuously monitored and revised. The most significant modification was to alter the schedule for the investigation of relevant cipher schemes. A weekly distribution requiring a minimum of one cipher per week to be investigated was preferred over a concentrated examination following the Stage 1 Progress Report. This allows associated testing software to be further refined as well as allowing other tasks to be completed in parallel to the cipher investigation. The designated schedule for the text analysis algorithms has also been changed since the Stage 1 Progress Report. In accordance with good programming practices, the pattern matching and statistical analysis algorithms are being designed, implemented and tested individually. The Gantt chart has been modified to reflect this, as opposed to the previously planned approach of all algorithms being designed, implemented and tested together. A task for documentation of the results has also been added to the schedule.

To summarise, project progress is beyond the original plan established in the Stage 1 Progress Report. It is the belief of both team members that the schedule alterations introduced for semester 2 coupled with the existing progress management strategy will ensure satisfactory progress is maintained and will be more than sufficient to achieve a successful outcome with the project.

Responsibility and Progress Breakdown

The following figure shows the breakdown of tasks between team members as well as an indication of current progress on each task. While the responsibility breakdown is not exclusive and both team members typically contribute to all sections, it gives an indication of the division of responsibilities.

Budget

In the proposal seminar and the Stage 1 Progress Report it was projected that the budget available to date would be $500 minus any reasonable incidental expenditure. Up to the current stage of development there has been no variation from the projected budget and no requirement for incidental expenditure. The available budget therefore remains at $500 provided by the University of Adelaide.

The planned budget for the second semester has been reviewed and adjustments have been made. Research conducted by Patrick into the Somerton Man case revealed an exhibition at the Bay Discovery Centre in Glenelg. Both students intend to view the display, and the entry cost has been included as expenditure within the budget. The entry cost is a gold coin donation for each student. Furthermore, with the project exhibition to be completed in the coming semester, a portion of the budget has been allocated towards additional presentation resources, specifically the printing of two additional A1 sized posters to accompany the one provided by the university. For ease and immediate accessibility, the University of Adelaide has been nominated as the supplier. In accordance with their pricing structure of $30 per A1 sheet, $60 has been allocated. The remainder of the budget will continue to be provisioned for incidental expenditure, thus maintaining the flexibility and contingency already provided to this stage of development. The revised budget has been summarised in the table below.

| Budget Allocation | Amount |
| --- | --- |
| Bay Discovery Centre Exhibition | $4.00 |
| Project Exhibition Additional Printing | $60.00 |
| Incidental Expenditure | $436.00 |
| Total Allocated: | $500.00 |
| Total Provided: | $500.00 |

Risk Management

Project Risk Management

The project risk management plan developed for the proposal has been effective during semester 1. Creating a thorough list with individual management strategies has ensured these risks have had no influence on project progress. An analysis of each strategy’s performance is included in further detail below. At this stage no additional project related risks have needed to be added to the list presented in the Stage 1 Progress Report. These risks, their estimations and individual reduction strategies have been summarised in Table 4.

| Risk | Risk Estimation | Reduction Strategy |
| --- | --- | --- |
| Availability of personnel | High | Regular meetings with flexible schedule |
| Insufficient financial resources | Medium | Open source software and project budget |
| Software development tool access | High | Suitable personal work environment on laptop for each team member |
| Unable to maintain software development schedule | Medium | Progress management strategy |
| The Somerton Man case is solved | Low | No risk reduction strategy |

During the previous semester, schedule conflicts that would have limited personnel availability were avoided. Regular collaboration between the project team also mitigated any risk associated with external deadlines influencing project progress. It has therefore been concluded that this reduction strategy is functioning appropriately and does not need to be altered. As this particular risk reduction strategy also provides contingency for team member ill health, the original risk estimation of high will continue to be used.

The effectiveness of using the budget as the financial risk reduction mechanism was apparent throughout semester 1, as at no stage was progress restricted by financial impositions. Even with the introduction of new costs, the budget as indicated in the section above does not threaten to impose any developmental restrictions during the remaining project lifecycle. As a result, the risk estimation will not be changed and the budget will continue to be used as the risk reduction strategy.

There have been no issues of software development tool accessibility. In accordance with the risk reduction strategy, personal laptop computers were used in the situation no university resources were available. Upon the success of the strategy thus far both team members agree that modifications are not required. The high risk estimation remains valid given the continued relevance of the original concern of accessibility to specialised software whilst on the university campus.

During semester 1, all required project deliverables were submitted prior to their respective deadlines. In addition, software development is progressing as specified in the Stage 1 Progress Report Gantt chart. This was seen as confirmation of the success of the Progress Management Strategy as the regulating mechanism. As such, it will continue to be used as the risk reduction strategy in semester 2. Given that final submission is in the impending semester, as detailed above in the Project Schedule, the risk estimation of software development falling behind schedule remains valid. No change will be made at this time.

As stated in the Stage 1 Progress Report, no risk reduction strategy was introduced for the event that the Somerton Man case is solved. The determining factors were the uncontrollable nature of the risk’s origins and a broad project scope that ensures the deliverables remain useful beyond the Somerton Man case. This will continue to be the arrangement for semester 2. Furthermore, the risk estimation of low remains valid since no solutions to the code were released during the first semester.

Both team members agree that the project-associated risks do not need to be reassessed at this stage of development. The decision has also been made to retain the current risk management strategy given its successful operation during semester 1.

Occupational Health and Safety Risk Management

The OHS risk management strategy was effective throughout semester 1. Table 5, shown below, summarises all current OHS risks and their respective estimations. No additions to the risks reported in the Stage 1 Design Document were required during the semester, and no risk estimations needed to be revised.

For the management of physical hazards, the stringent OHS policy requirements employed by the University of Adelaide have been, and will continue to be, sufficient. In addition, the project working areas on the university campus remain adequately distant from the external construction noise to offset this hazard.

In the Stage 1 Progress Report, the two psychological hazards, stress and the repetition of tasks, were deemed as critical as the physical hazards. Each team member was responsible for implementing their own personalised risk management strategy to ensure psychological health was maintained. During the first half of the project there have been no instances of team members’ psychological states impacting project progress. The current arrangement will therefore continue to be used.

Use of the University of Adelaide Workstation Ergonomic guidelines to govern work place layout was effective in semester 1 for both project team members. A collective agreement was reached between both team members that this will continue to be adequate for mitigating ergonomic hazards in the remaining project lifecycle.

| Risk | Risk Estimation |
| --- | --- |
| Physical Hazards | |
| External construction noise | Medium |
| Falls within laboratory | Medium |
| Injuries due to electrical shock | Medium |
| Chemical Hazards | |
| None | - |
| Biological Hazards | |
| None | - |
| Ergonomic Hazards | |
| Work place layout | Low |
| Radiation Hazards | |
| None | - |
| Psychological Hazards | |
| Work related stress | Medium |
| Repetitive tasks | Medium |

As was the case with the project related risks, the OHS Risks will not be reassessed. Following previous success, the risk management strategy as detailed above will continue to be used to the end of the project lifecycle in October, provided the evaluated circumstances remain consistent. If a change does occur, the current strategy will be reviewed accordingly.

Summary

Both project team members are happy with the progress of the project thus far. The cryptanalytic side of the project has seen significant levels of work, with many ciphers investigated and the concept of a cipher GUI introduced as a testing tool. The structural analysis of the Rubaiyat has been completed according to the design aims, and any further work in this area will be an extension. The web crawler and pattern matcher side of the project is still in the early stages of development but is proceeding according to plan. Some initial pattern matching functions have been completed, and testing of web crawler implementation possibilities has provided clear direction for future work.

Over the next period of the project, the final period, we are planning to continue the cipher analysis and complete the build of the Cipher GUI, as well as design, implement, integrate and test our web crawler and pattern matching applications. Project progress is thus far proceeding according to the Gantt chart schedule and all potential identified risks have so far been avoided. The budget is not limiting progress in any way and still has room for any cost surprises.

We are looking forward to bringing the project to a successful conclusion in the next period, with full expectation of meeting all deadlines and requirements.

References

- http://en.wikipedia.org/wiki/Taman_Shud_Case

- http://en.wikipedia.org/wiki/Taman_Shud_Case

- https://www.eleceng.adelaide.edu.au/personal/dabbott/wiki/index.php/Final_report_2009:_Who_killed_the_Somerton_man%3F

- https://www.eleceng.adelaide.edu.au/personal/dabbott/wiki/index.php/Final_Report_2010

- http://faculty.cs.byu.edu/~rodham/cs240/crawler/index.html

See also

- Cipher Cracking 2011

- Stage 1 Design Document 2011

- Cipher Cross-off List

- Timeline of the Taman Shud Case

- List of people connected to the Taman Shud Case

- List of facts on the Taman Shud Case that are often misreported

- List of facts we do know about the Somerton Man

- The Taman Shud Case Coronial Inquest

- Letter frequency plots

- Structural Features of the Code

- Markov models

- Primary source material on the Taman Shud Case

- Secondary source material on the Taman Shud Case

- Transition probabilities from selected texts

- Listed poems from The Rubaiyat of Omar Khayyam

- Using the Rubaiyat of Omar Khayyam as a one-time pad

- Using the King James Bible as a one-time pad

- Using the Revised Standard Edition Bible as a one-time pad

- Transitions within words

References and useful resources

- The taman shud case

- Edward Fitzgerald's translation of رباعیات عمر خیام by عمر خیام

- Adelaide Uni Library e-book collection

- Project Gutenberg e-books

- Foreign language e-books

- UN Declaration of Human Rights - different languages

- Statistical debunking of the 'Bible code'

- One time pads

- Analysis of criminal codes and ciphers

- Code breaking in law enforcement: A 400-year history

- Evolutionary algorithm for decryption of monoalphabetic homophonic substitution ciphers encoded as constraint satisfaction problems